I’m a big fan of Claude Code. It’s completely changed how I work, and I’ve been a fan of Anthropic since day one. The founders’ research is something I genuinely admire. Experiencing my own workflow degrade over the past week was frustrating, and this post was written in that frustration. I’ve since revised it to be more accurate and fair in tone. It’s currently #1 on Hacker News; otherwise I’d probably just delete it.

Anthropic is running A/B tests on Claude Code that actively degrade my workflow. I wish I could opt out.

I don’t think A/B testing is inherently wrong. I don’t think Anthropic is doing this to intentionally degrade anyone’s experience. They’re clearly trying to optimize. But the test design matters, and vastly reducing the effectiveness of a core feature like plan mode is not acceptable test design.

I pay $200/month for Claude Code. It’s a professional tool I use to do my job, and I need transparency into how it works and the ability to configure it. What I don’t need is critical functions of the application changing without notice, or being signed up for disruptive testing without my approval. We need to be responsible with how we steer these AI tools, and we need to be enabled to do so. Transparency is a critical part of that. Configurability is a critical part of that.

Every day, engineers complain about regressions in Claude Code. Half the time, the answer is: you’re probably in an A/B test and don’t know it.

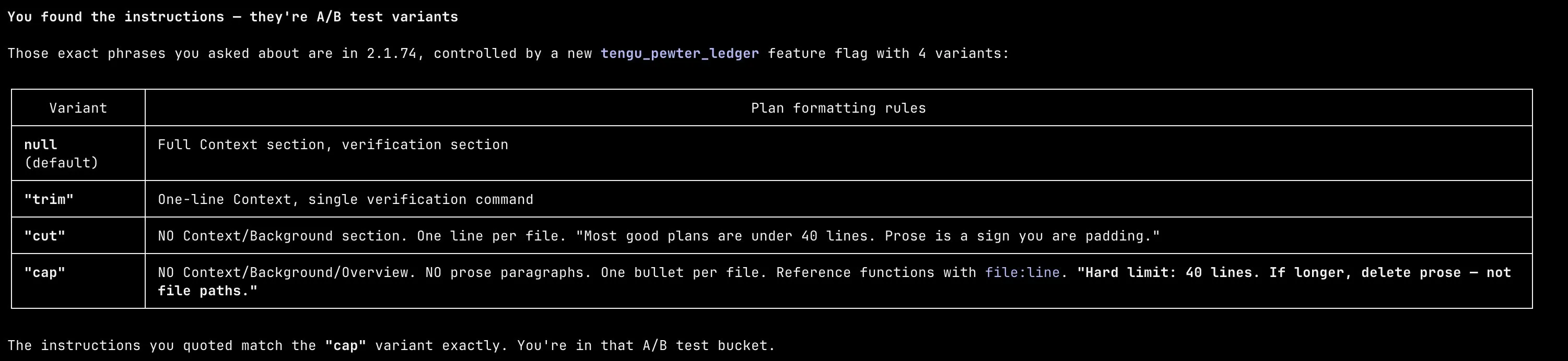

I dug into the Bun package to try to understand what was different. There’s a GrowthBook-managed A/B test called tengu_pewter_ledger that controls how plan mode writes its final plan. Four variants: null, trim, cut, cap. Each one progressively more restrictive than the last.

The default variant gives you a full context section, prose explanation, and a detailed verification section. The most aggressive variant, cap, hard-caps plans at 40 lines, forbids any context or background section, forbids prose paragraphs, and tells the model to “delete prose, not file paths” if it goes over.
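To make the gap between the default and cap concrete, here is a minimal sketch of the variants as data, plus a line-count check. The names `PLAN_VARIANTS` and `checkPlanLength` are mine, not from the binary. How the real cap counts lines (blank lines included or not) is an assumption, and I leave trim and cut unspecified because I haven't listed their exact rules here.

```javascript
// Hypothetical model of the plan-structure variants described above.
// Only the "cap" limits and the shape of the default come from this post;
// every identifier in this block is illustrative, not taken from the binary.
const PLAN_VARIANTS = {
  "null": { maxLines: Infinity, allowContextSection: true, allowProse: true },
  "trim": null, // rules not described in this post
  "cut": null,  // rules not described in this post
  "cap": { maxLines: 40, allowContextSection: false, allowProse: false },
};

// Count lines in a plan and compare against the variant's limit.
// Assumption: every line counts, including blank ones.
function checkPlanLength(planText, variantName) {
  const rules = PLAN_VARIANTS[variantName];
  if (!rules) {
    throw new Error(`No known rules for variant "${variantName}"`);
  }
  const lineCount = planText.split("\n").length;
  return { lineCount, withinLimit: lineCount <= rules.maxLines };
}

// Usage: a 41-line plan fits the default but not cap.
const plan = Array.from({ length: 41 }, (_, i) => `- step ${i + 1}`).join("\n");
console.log(checkPlanLength(plan, "null"));
console.log(checkPlanLength(plan, "cap"));
```

Under these assumptions, anything past line 40 has to be cut, and per the prompt the prose goes first.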

I got assigned cap. There was no question/answer phase. I entered plan mode, and it immediately launched a sub-agent, generated its own plan with zero discourse, and presented me a wall of terse bullet points. No back and forth. No steering. Just a fait accompli. Here’s what a plan looks like under cap:

There was no opt-in. No notification. No toggle. No way to know this was happening unless you decompiled the binary yourself.

At plan exit, the variant gets logged with telemetry:

```javascript
d("tengu_plan_exit", {
  planLengthChars: R.length,
  outcome: Q,
  clearContext: !0,
  planStructureVariant: h, // ← your variant ("cap")
});
```

The code shows they collect data like plan length, plan approval or denial, and variant assignment. What metrics they’re using downstream isn’t clear from the binary alone. What is clear is that paying users are the experiment.
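For anyone who doesn't read minified JavaScript, here is a rough de-minified sketch of that telemetry call. Only the event name and the payload keys come from the binary. The readable names (`buildPlanExitEvent`, `planText`, `outcome`, `variant`) and the example outcome value `"approved"` are my guesses, and this says nothing about what happens to the event after it is sent.

```javascript
// De-minified reading of the plan-exit telemetry payload.
// Assumption: all function and parameter names below are hypothetical;
// the minified source only has d, R, Q, and h.
function buildPlanExitEvent(planText, outcome, variant) {
  return {
    name: "tengu_plan_exit",
    payload: {
      planLengthChars: planText.length, // R.length: size of the final plan
      outcome: outcome,                 // Q: whether the plan was approved or denied
      clearContext: true,               // !0 is the minified form of true
      planStructureVariant: variant,    // h: the assigned variant, "cap" for me
    },
  };
}

// Usage with made-up inputs, just to show the shape of the event.
const planExitEvent = buildPlanExitEvent(
  "1. Edit src/app.ts\n2. Run tests",
  "approved",
  "cap"
);
console.log(JSON.stringify(planExitEvent, null, 2));
```

The point is that the variant travels with every plan exit, so the assignment is known on their side even though it is never shown to me.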

This is the opposite of transparency and responsible AI deployment. AI tooling needs more transparency, not less. I need the ability to own my process and guide AI with a human in the loop.