I have deliberately tried not to write too much about AI, because the signal gets swamped by the noise. But I think the picture is becoming clearer now. This week on The Next Wave, I’m going to re-publish versions of posts that originally appeared on my newsletter, Just Two Things: one from last summer, and one that goes live this week.

—-

Just by way of a thought experiment: what if the current surge in the bunch of technologies that goes under the label of ‘AI’ isn’t the beginning of a whole new technology surge, but is actually the final stage of the digital surge that started in the 1970s and accelerated at the turn of the century?

I’ve been wondering this for a while in a vague kind of a way because I haven’t been able to see the business model that supports the huge investment in AI in the USA. (I’ve written about this before on here.)

This is a long way in to a couple of pieces by Nicolas Colin, the strategy and innovation blogger, who has been wondering the same thing, but a lot more coherently. He calls this ‘late cycle investment theory’.

The Perez model

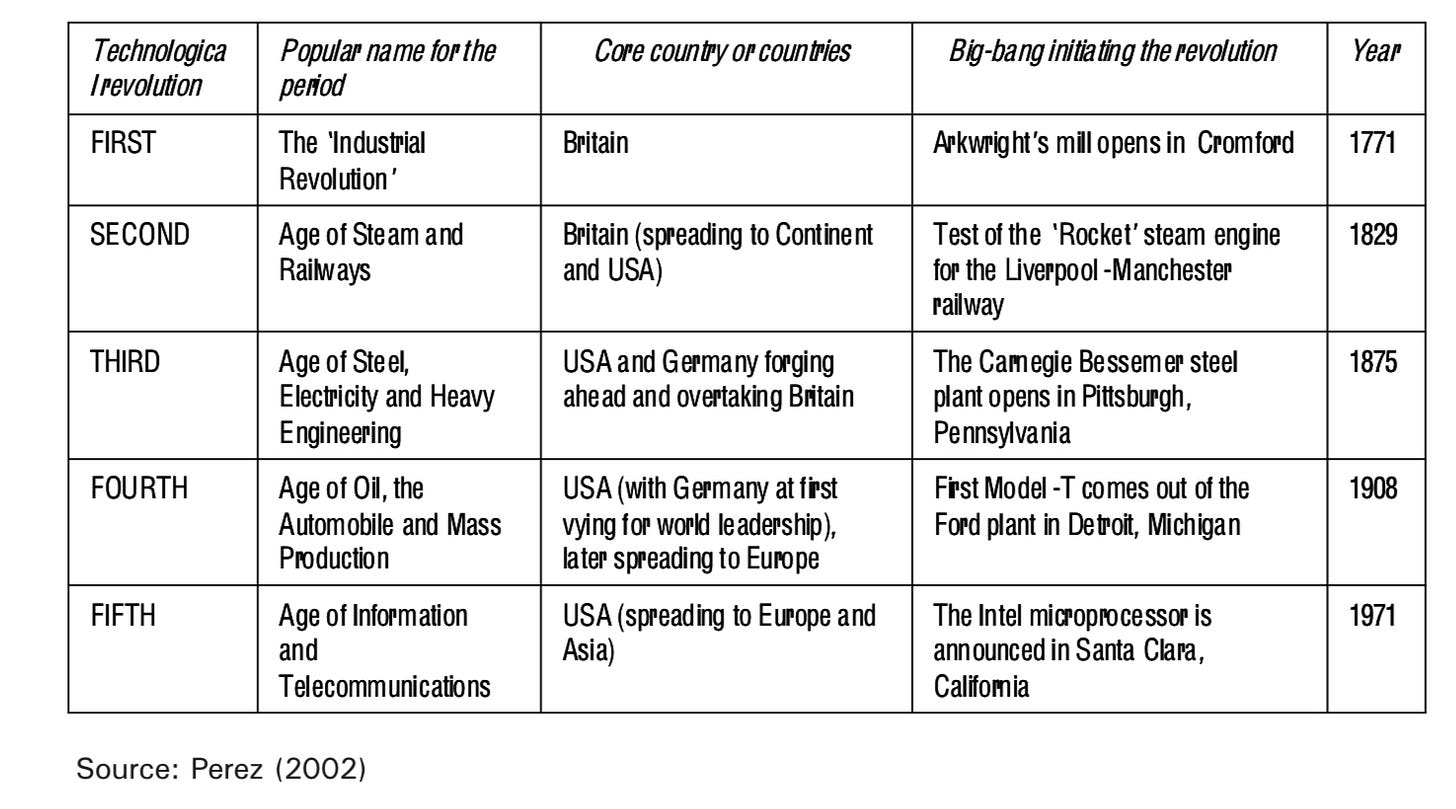

Like me, he is a fan of the work of the academic Carlota Perez, who built on the work of Christopher Freeman to develop a model of how technology and finance interacted to create new long surges of investment, starting with canals and cotton, that run for 50-60 years. (She calls them ‘surges’ because unlike ‘waves’ each technology embeds itself in the society and its infrastructure.)

The two most recent surges are the cars/oil surge, which started in 1908, and the Information and Communications Technology (ICT) surge, which started in 1971.

I’m not going into all of the theory of the Perez model here—it’s online if you want to do that, and I have written about it elsewhere—but the relevant point for the present discussion is that it follows an S-curve, and the first half is slow going, as new infrastructure is ‘installed’, and some of it is below the radar. The internet was a closed academic network for most of the first part of its S-curve.

From infrastructure to ‘deployment’

Halfway through, after a lot of infrastructure has been built out, and usually following a financial crash in which some of the investors in that infrastructure lose their shirts, ‘deployment’ companies take over, with actual customers and business models, and have an accelerated period of growth, before they hit market limits and turn into ordinary businesses. And the investors who have made large returns from that period of growth start looking elsewhere—for the technologies that will make the next surge.

The reason I like Perez’s version is that her model has had a lot of explanatory power over the last 25 years as I have watched the evolution of the tech sector.

That’s a long way into Colin’s argument, and let me quote from his first article directly:

Seen through a late-cycle lens, today’s markets show signs that we’ve entered the maturity phase of the computing and networks revolution. The theory, therefore, leads to specific, testable predictions about where capital should go and which strategies will outperform.

Three indicators

He points to three indicators from the tech sector that support this observation that we’re in the ‘late cycle’:

- The startup funding collapse of 2022 wasn’t just a correction—it may be structural. As investor Jerry Neumann argued in his landmark Productive Uncertainty, startups rely on uncertainty as a competitive edge. When good ideas become obvious to everyone—including well-funded incumbents—the startup model faces real strain.

- Then came AI, revealing new dynamics. ChatGPT’s breakthrough didn’t come from a garage startup but from OpenAI, backed by Microsoft’s vast computing power. Google, Meta, and Amazon responded with billions. This pattern—big tech deploying huge capital against well-understood problems—fits the late-cycle theory exactly.

- Most tellingly, platform saturation now looks almost complete. Digital transformation has reached most sectors where computing and networks can plausibly work. What remains—healthcare delivery, education, construction, government services—may reflect the paradigm’s natural limits, not untapped markets. [His emphasis]

Optimising the existing system

In the second article (some of it behind a paywall) he looks specifically at the way AI is being deployed, and I’m going to quote and paraphrase the visible parts of it here.

Late-cycle investment theory suggests AI is the efficiency breakthrough of the computing and networks era, not the start of a new one. Just as lean production refined mass production in the 1970s without replacing it, AI optimises the existing paradigm rather than creating a new one.

Colin’s done a lot of analysis here, and he’s assembled quite a lot of evidence which he shares. I’m not going to spend a lot of time on this, because I’m more interested in the bigger strategic questions that get raised if he is right.

What a new technology surge looks like

But it’s worth summarising some of the observations. First, that at the start of a new technology surge, you don’t know it’s happening. You understand the decisive moment afterwards, the moment at which an innovation transformed the cost structure (the Spinning Jenny, Watt’s condensing engine, the Ford production line, the microprocessor). But with AI, the moment was very visible, to the point of being choreographed.

Second, the amount of capital investment is off the scale. At the early stage of a surge, investment tends to be patchy and not fully understood—the sector exists but it is not completely legible yet.

And third, Colin suggests that AI allows computing to reach sectors that have in some ways resisted it:

Like lean production, which extended mass production’s dominance for decades through efficiency gains, AI doesn’t mark computing’s end but its maturation. The technology spreads to previously untouchable sectors, creating the illusion of radical novelty whilst actually representing computing and networks’ final conquest of the physical economy.

Late deployment

It’s worth pausing here. Although Perez dates the end of each of her surges from the date of the innovation that makes the next surge possible, there’s a kind of ‘late deployment’ stage in the old surge while the new one is still in its early stages of development.

So although the ICT surge dates from 1971, much of the final innovation in the cars/oil surge also dates from then. In the UK at that time, there was still a huge roadbuilding programme of motorways and ring-roads, and these then made possible the emergence of long-distance logistics, big-box out-of-town retailing, and edge-of-town business parks. Colin’s arguing that AI is the equivalent of bigger roads and big-box retail: different, but more about embedding the technology more deeply than about the kind of transformational change that eventually causes a new and distinctive form of abundance.

There’s also social pushback: in the UK the campaigns against big ring-road schemes started in the late 1960s and early 1970s. And perhaps we’re seeing some of that with AI. The U.S. map of local pushback against data centres from Data Center Watch covers the whole of the country, in red states and blue. People seem to hate Google’s insertion of AI tools into its search results, and hate even more that it is all but impossible to turn them off. This doesn’t speak to an exciting technology that is being embraced by its users. A note by Ted Gioia on his music blog says that:

Most people won’t pay for AI voluntarily—just 8% according to a recent survey. So [tech companies] need to bundle it with some other essential product.

Or as Ed Zitron noted recently of Notion:

Notion bumped its Business Plan from $15 to $20-a-month per user thanks to its new “AI features,” which I imagine sucked for previous business subscribers who didn’t want “AI agents” or any of that crap but did want things like Single Sign On and Premium Integrations. The result? Profit margins dropped by 10%. Great job everybody!

Normal returns

This matters for a couple of reasons. In the first place, late-stage, post-deployment technologies do produce returns on investment, but they’re normal returns, not increasing returns.

But in the second place it sheds a different light on what amounts to a ‘business model war’ going on between China and the United States at the moment through their different approaches to AI.

I think we know plenty about the American model. It is fuelled by a transhumanist ideology that is just this side of The Rapture, as Sam Altman of OpenAI reminds people every week of the year.

The Chinese model of AI

As the Exponential View newsletter explained on Sunday, quoting the policy organisation RAND, the Chinese model is completely different:

In Washington, the AI policy discourse is sometimes framed as a ‘race to AGI.’ In contrast, in Beijing, the AI discourse is less abstract and focuses on economic and industrial applications that can support Beijing’s overall economic objectives.

Azeem Azhar of EV added some gloss:

Chinese teams… publish leaner open-source architectures and partner with specialists in areas such as healthcare analytics (Yidu Tech) and adaptive learning (Squirrel AI).

This is partly driven by constraints: China has far less computing power than the US, and needs to build lean. This also means that its model is far more exportable. But the important point here is that if AI is a late-stage technology and not the next large surge of innovation, the Chinese model matches the moment. Perhaps we shouldn’t be surprised: unlike in most countries, a third of the full members of China’s Central Committee are technocrats.

—


This is a slightly updated version of an article that was first published on my Just Two Things newsletter.