16th April 2026

For anyone who has been taking my pelican riding a bicycle benchmark seriously as a robust way to test models, here are pelicans from this morning’s two big model releases—Qwen3.6-35B-A3B from Alibaba and Claude Opus 4.7 from Anthropic.

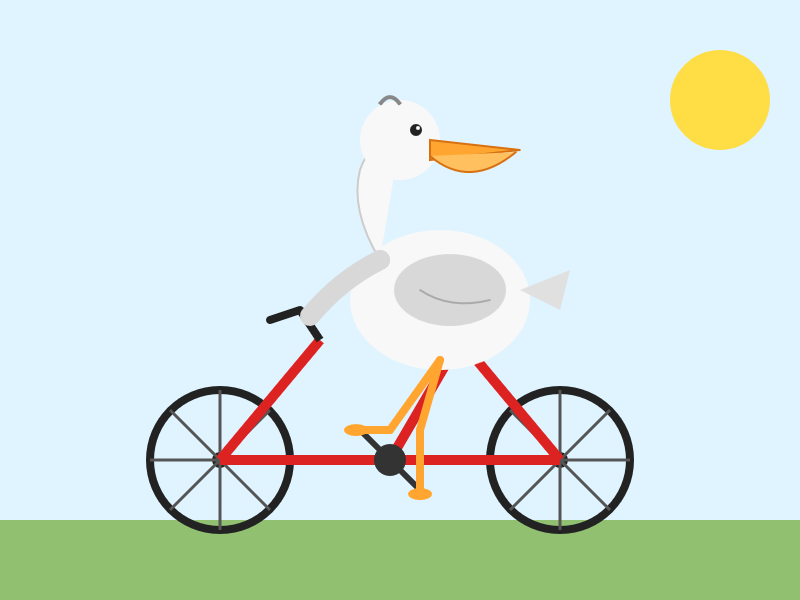

Here’s the Qwen 3.6 pelican, generated using this 20.9GB Qwen3.6-35B-A3B-UD-Q4_K_S.gguf quantized model by Unsloth, running on my MacBook Pro M5 via LM Studio (and the llm-lmstudio plugin)—transcript here:

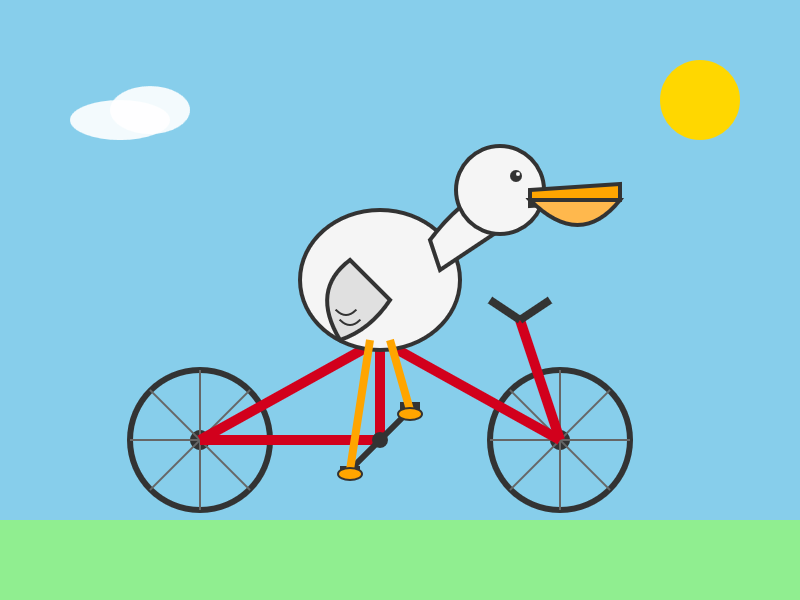

And here’s one I got from Anthropic’s brand new Claude Opus 4.7 (transcript):

I’m giving this one to Qwen 3.6. Opus managed to mess up the bicycle frame!

I tried Opus a second time passing thinking_level: max. It didn’t do much better (transcript):

I don’t think Qwen are cheating

A lot of people are convinced that the labs train for my stupid benchmark. I don’t think they do, but honestly this result did give me a little glint of suspicion. So I’m burning one of my secret backup tests—here’s what I got from Qwen3.6-35B-A3B and Opus 4.7 for “Generate an SVG of a flamingo riding a unicycle”:

I’m giving this one to Qwen too, partly for the excellent <!-- Sunglasses on flamingo! --> SVG comment.

What can we learn from this?

The pelican benchmark has always been meant as a joke—it’s mainly a statement on how obtuse and absurd the task of comparing these models is.

The weird thing about that joke is that, for the most part, there has been a direct correlation between the quality of the pelicans produced and the general usefulness of the models. Those first pelicans from October 2024 were junk. The more recent entries have generally been much, much better—to the point that Gemini 3.1 Pro produces illustrations you could actually use somewhere, provided you had a pressing need to illustrate a pelican riding a bicycle.

Today, even that loose connection to utility has been broken. I have enormous respect for Qwen, but I very much doubt that a 21GB quantized version of their latest model is more powerful or useful than Anthropic’s latest proprietary release.

If the thing you need is an SVG illustration of a pelican riding a bicycle though, right now Qwen3.6-35B-A3B running on a laptop is a better bet than Opus 4.7!