Ethan Mollick has been writing about AI adoption in organizations for a while now. In Making AI Work: Leadership, Lab, and Crowd, he makes the point that individual productivity gains from AI do not automatically become organizational gains. People may get faster, write better, analyze more, automate more, or quietly become cyborg versions of themselves. The company may still learn almost nothing.

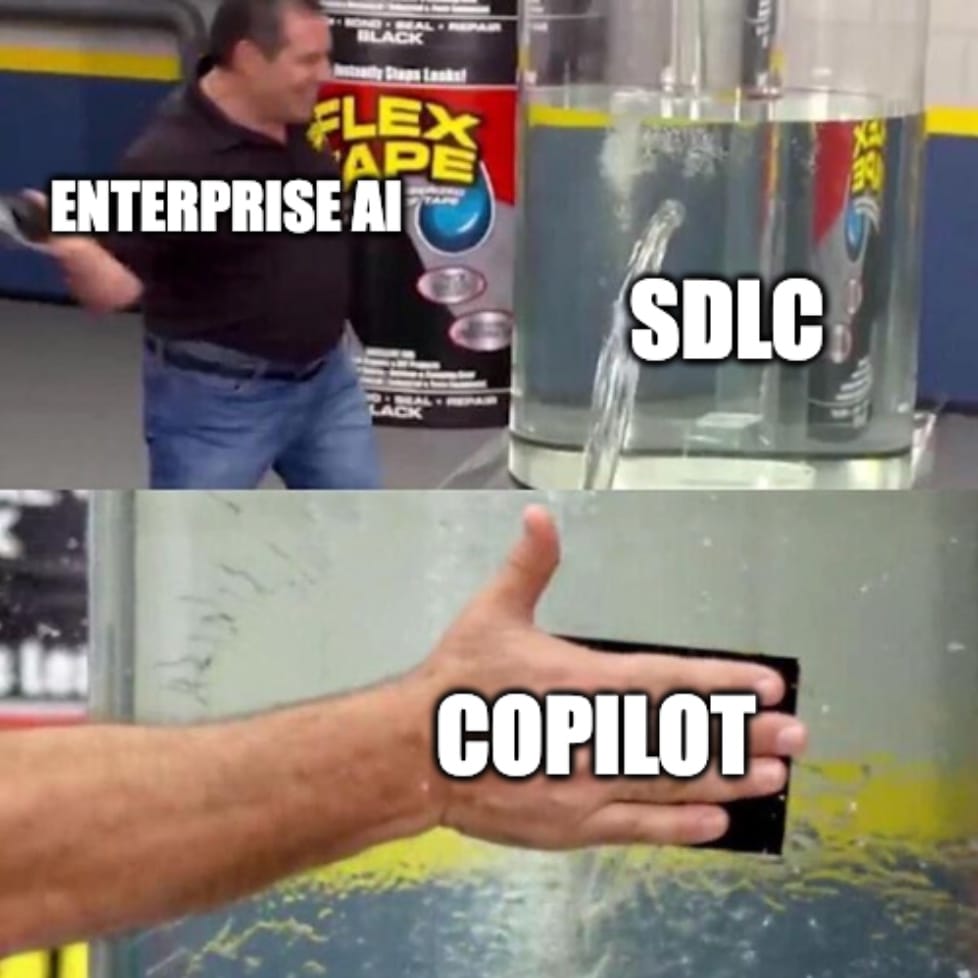

A lot of companies are now entering the phase where GitHub Copilot licenses are provisioned, ChatGPT Enterprise exists somewhere in the stack, Claude or Gemini or Cursor show up in pockets, and every team has at least one person who is much further along than the official enablement material assumes. Some of this is visible, but much of it is not. Management sees license usage (“Where is the ROI for the €2 million we paid Anthropic last year?”), maybe prompt counts, maybe a survey, maybe a few internal PoCs that feel encouraging enough to put into a steering committee deck. In other companies, AI went straight to IT and died.

I think everyone knows this is the phase where it gets complicated, like, really complicated. The “messy middle” of AI adoption starts when AI use is everywhere, uneven, partially hidden, difficult to compare, and not yet connected to organizational learning.

Everyone has Copilot now

The first phase of AI adoption is (mostly) comfortable because it looks like other enterprise rollouts. You buy seats. You define acceptable use. You run training. You create a champion network. You ask people to share use cases in a Teams channel, which will briefly look alive and then become one more corporate attic full of good intentions.

The second phase is much stranger: one team uses Copilot as autocomplete and calls it a day. Another team runs Claude Code in tight loops, with tests, reviews, and constant steering. A product owner suddenly prototypes real software instead of mocking screens in Figma. A senior engineer delegates a root-cause analysis to an agent and comes back to a valid solution in under an hour, work that would have taken him two weeks without AI. A junior person produces polished code but has no idea which architectural assumptions got smuggled into the system. A support team quietly turns recurring tickets into workflow automation, because they know exactly where the work hurts and nobody in the Center of Excellence ever asked the right question.

All of these things can happen in the same company at the same time. That is what makes the messy middle messy: the adoption unit is no longer the organization, and maybe not even the team. It is the loop inside the work!

Mollick’s Leadership, Lab, and Crowd frame is useful here. Leadership sets direction and permission. The Crowd discovers use cases because the Crowd does the actual work. The Lab turns those discoveries into shared practices, tools, benchmarks, and new systems. But the part I keep getting stuck on is the same one that shows up in agentic engineering again and again: how does the learning actually travel?

The old change machinery is too slow for this

Most companies will try to process AI adoption through the machinery they already have. Communities of practice, brown-bag sessions, champion networks, enablement decks, office hours, monthly demos, surveys, maybe a dashboard. Fair enough, I did it, you did it. Some of that helps, especially in organizations that still need permission to experiment at all.

But the interesting AI work does not wait for the next community meeting. It appears inside a code review, a sales proposal, a research task, a product prototype, a production incident, a test strategy, a compliance question. Or when someone figures out that for a certain class of product components, they can set up something close to a dark factory: write the intent, let the agent run a very loose loop, apply enough backpressure to keep it on track, evaluate the outcome against strong scenarios, refine the intent, and repeatedly get high-quality results. By the time the story is cleaned up enough to become a best-practice slide, the important learning has often lost its teeth. What made it useful was the friction: the missing context, the test that failed, the weird API behavior, the moment where the agent sprawled into nonsense and someone had to pull it back.

I have been thinking about this through the same lens as the elastic loop. AI collaboration is not one mode! It stretches from tight, synchronous co-driving to looser, asynchronous delegation. The adoption question is not simply “are people using AI?” It is whether teams know which loop size to use, where they need resistance, which artifacts should survive the loop, and how those artifacts become something the organization can learn from.

That is a much harder question than tool usage or bean (token) counting.

Scrum was built for expensive iteration

I have argued before that much of modern software process exists because human iteration used to be expensive. Sprint planning, estimation, standups, user stories, ticket grooming, handoffs, all the ceremony around coordination and risk reduction. Reasonable, given the constraints. If a single iteration takes days or weeks, you need structures that prevent people from wasting too many of them.

But agentic engineering changes the economics: It makes more options materializable! It lets teams move from intent to prototype to evaluation much faster. It lets product people see working software earlier. It lets engineers test more hypotheses before committing. It does not magically make delivery easy, but it moves the constraint away from implementation and toward intent, verification, judgment, and feedback.

The awkward thing is that many organizations spent twenty years calling themselves agile while preserving the organizational reflexes agile was supposed to remove. Now AI makes real agility more plausible, and the system still asks for two-week sprint commitments, handoff documents, and all the stuff that assumes iteration is scarce.

That is the ceremony graveyard again, but now at adoption level. The loop can move faster than the organization can metabolize what the loop learned.

The open bar will not stay open forever

There is another pressure building underneath all this. AI usage will become more visibly metered. The current enterprise feeling of “everyone has access, don’t worry too much about the bill” will not hold forever, at least not in the form people are getting used to. Model routing, token budgets, usage-contingent pricing, inference costs, governance around which model is allowed for which task: all of that will become more explicit as companies move from casual assistance to serious agentic work.

I do not want to make this a cost panic story. That would be the least interesting way to think about “rented intelligence”. The question is not how to minimize token spend in the abstract, any more than the question of software delivery was ever how to minimize keystrokes.

But the bill will force a better question: what changed because we spent those tokens?

Please, I beg you, don’t count pull requests. Better: Which loops closed faster? Which decisions improved? Which root-cause analyses got sharper? Which reviews caught more? Which teams learned reusable patterns? Which product ideas were killed earlier because a prototype made the weakness obvious? Where did AI create learning, and where did it just create more output?

Token-to-output is the old measurement reflex in a new costume. Token-to-learning is closer to the thing that matters.
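To make the difference between the two ratios concrete, here is a minimal sketch. Everything in it is an assumption for illustration: the `LoopRecord` fields, the idea that a "learning" can be counted as a discrete captured item (a reusable pattern, a killed idea, a sharper decision), and the per-million-token normalization. It is not a proposed standard metric, just a way to see that the same token spend can look very different depending on what you divide it by.

```python
from dataclasses import dataclass


@dataclass
class LoopRecord:
    """One AI-assisted loop, as a hypothetical record (all fields are assumptions)."""
    team: str
    tokens: int              # tokens spent in this loop
    artifacts_shipped: int   # output: pull requests, documents, closed tickets
    learnings_captured: int  # learning: reusable patterns, killed ideas, sharper decisions


def per_million_tokens(count: int, tokens: int) -> float:
    """Return `count` normalized per one million tokens; 0.0 if no tokens were spent."""
    return count * 1_000_000 / tokens if tokens else 0.0


def summarize(records: list[LoopRecord]) -> dict[str, float]:
    """Compute both ratios over the same spend so they can be compared side by side."""
    tokens = sum(r.tokens for r in records)
    return {
        "token_to_output": per_million_tokens(
            sum(r.artifacts_shipped for r in records), tokens
        ),
        "token_to_learning": per_million_tokens(
            sum(r.learnings_captured for r in records), tokens
        ),
    }


if __name__ == "__main__":
    records = [
        LoopRecord("team-a", tokens=500_000, artifacts_shipped=10, learnings_captured=1),
        LoopRecord("team-b", tokens=500_000, artifacts_shipped=2, learnings_captured=4),
    ]
    print(summarize(records))
```

In this made-up example, team-a wins on output and team-b wins on learning for the same spend, which is exactly the case a pull-request count would misread.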

Loop Intelligence is the missing feedback path

I keep coming back to three capabilities companies will need in the messy middle.

- Agent Operations: which agents and AI tools are running, what systems they can touch, which data they can see, which actions require approval, where identity, audit, permissions, and runtime visibility live. This is the control side, and it matters because agentic work eventually touches real systems.

- Loop Intelligence: which AI-assisted (or fully agentic) loops actually produce learning, which ones stay open, which ones decay, where agents create leverage, where they sprawl into side quests, which teams are stuck in tight supervision because they lack tests, context, or intuition, and which teams are ready for looser delegation.

- Agent Capabilities: how useful capabilities get distributed across the organization without pretending that three monolithic agents can do everyone’s work. AI is starting to behave more like a fluid base technology than a single application category. It does not fit cleanly into one “HR agent,” one “engineering agent,” one “sales agent,” each sitting somewhere in the enterprise zoo. The better question is how capabilities flow into the places where work happens: employee harnesses, background agents, product teams, platform services, local skills, MCP servers, evaluation suites, runbooks, examples, and domain-specific procedures.

This is where the platform question gets interesting. Who owns these capabilities? How does a useful agent skill discovered in one team become available to others without turning into a dead template? How do you enrich a developer’s harness differently from a product person’s harness, a support team’s background agent, or a compliance workflow? Which capabilities belong close to the team, which belong in a platform layer, and which should never be generalized because the local context is the whole point?

One without the others gets weird quickly. Agent Operations without Loop Intelligence becomes control bureaucracy. Loop Intelligence without Agent Capabilities becomes an analytics layer that discovers useful patterns but has no way to feed them back into work. Agent Capabilities without Operations and Loop Intelligence becomes tool sprawl with better branding. We can all have nice charts these days, no need to ask the IT department to build a dashboard anymore, right?

The control path, the learning path, and the capability path have to meet somewhere.

That somewhere is what I have been calling a feedback harness internally. I am not sure I like the term for customers. It sounds too much like something from an architecture diagram, and customers do not buy harnesses because the mechanism is elegant, even if it’s the thing of the year. They buy confidence, better decisions, faster learning, less waste, safer delegation.

So the more useful customer-facing concept might be a Loop Intelligence Hub.

A feedback harness listens to real work loops: tasks, prompts, specifications, reviews, scenarios, accepted and rejected hypotheses, production signals, rework, human decisions and interventions. Not to watch people, but to understand the loop. A first version does not have to be a giant platform. Pick a few real workflows, instrument the points where intent, agent work, verification, and human decision already leave traces, collect enough qualitative feedback to understand why a loop worked or failed, and turn that into a recurring learning artifact.
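A first version of that instrumentation could be as small as the sketch below. The names (`LoopEvent`, `learning_artifact`) and the four stage labels are my assumptions, taken from the four trace points named above; no existing tool is implied. Note that events are keyed by workflow, not by person, which is a deliberate choice to keep the focus on the loop.

```python
from collections import defaultdict
from dataclasses import dataclass

# The four points where a loop already leaves traces (labels are assumptions).
STAGES = ("intent", "agent_work", "verification", "human_decision")


@dataclass(frozen=True)
class LoopEvent:
    """One trace from a real work loop. Keyed by workflow, deliberately not by person."""
    workflow: str
    stage: str      # one of STAGES
    ok: bool        # did this step go as intended?
    note: str = ""  # qualitative feedback: why it worked or failed


def learning_artifact(events: list[LoopEvent]) -> dict[str, dict]:
    """Group events per workflow into a small, recurring summary."""
    summary = defaultdict(
        lambda: {"events": 0, "failures": defaultdict(int), "notes": []}
    )
    for event in events:
        if event.stage not in STAGES:
            raise ValueError(f"unknown stage: {event.stage}")
        entry = summary[event.workflow]
        entry["events"] += 1
        if not event.ok:
            entry["failures"][event.stage] += 1
        if event.note:
            entry["notes"].append(event.note)
    # Convert nested defaultdicts to plain dicts for stable output.
    return {
        workflow: {
            "events": entry["events"],
            "failures": dict(entry["failures"]),
            "notes": entry["notes"],
        }
        for workflow, entry in summary.items()
    }


if __name__ == "__main__":
    events = [
        LoopEvent("checkout-refactor", "verification", ok=False, note="scenario test failed"),
        LoopEvent("checkout-refactor", "human_decision", ok=True),
        LoopEvent("ticket-triage", "agent_work", ok=True),
    ]
    print(learning_artifact(events))
```

The notes matter more than the counts. The counts tell you where a loop breaks; the notes are the only place the friction survives.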

A Loop Intelligence Hub turns those signals into something the organization can act on: an enablement backlog, a capability radar, investment briefs, governance gaps, reusable workflows, training needs, evaluation priorities. No one-size-fits-all dashboards; the views are customized to what is relevant. The interesting output is not the dashboard anyway. It is the decision that follows: this team needs better backpressure before it can delegate more (stretch the loop), this product group has a repeatable dark-factory pattern for a narrow class of components, this compliance workflow needs a governed tool boundary, this skill should move into the platform because five teams have reinvented it badly.
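As a rough illustration of "the decision that follows", the sketch below turns a few loop signals into a recommendation. The signal names, the thresholds (`min_verified`, `max_rescue_rate`), and the wording of the recommendations are all assumptions I made up for the example; only the five-team cutoff for platform candidates echoes the text above. Real decisions would need the qualitative context that a handful of ratios cannot carry.

```python
from dataclasses import dataclass


@dataclass
class TeamSignals:
    """Aggregated loop signals for one team (hypothetical fields)."""
    team: str
    loops: int                    # loops observed
    loops_with_verification: int  # loops that had tests or scenarios as backpressure
    human_rescues: int            # loops where someone had to pull the agent back


def recommend(
    signals: TeamSignals,
    min_verified: float = 0.8,     # assumed threshold, tune per context
    max_rescue_rate: float = 0.2,  # assumed threshold, tune per context
) -> str:
    """Suggest the next step for a team's loop size. A heuristic, not a verdict."""
    if signals.loops == 0:
        return "no data yet: observe more loops before deciding"
    verified = signals.loops_with_verification / signals.loops
    rescue_rate = signals.human_rescues / signals.loops
    if verified < min_verified:
        return "add backpressure (tests, scenarios) before delegating more"
    if rescue_rate > max_rescue_rate:
        return "keep the loop tight: agents still sprawl too often"
    return "candidate for looser delegation"


def platform_candidates(
    skill_to_teams: dict[str, list[str]], min_teams: int = 5
) -> list[str]:
    """Skills that at least `min_teams` distinct teams have built on their own."""
    return sorted(
        skill
        for skill, teams in skill_to_teams.items()
        if len(set(teams)) >= min_teams
    )


if __name__ == "__main__":
    print(recommend(TeamSignals("payments", loops=10, loops_with_verification=5, human_rescues=0)))
    print(platform_candidates({"changelog-writer": ["a", "b", "c", "d", "e"], "sql-linter": ["a"]}))
```

The output is a prompt for a conversation with the team, not a score for it.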

The harness collects and the hub helps the organization decide. The capability layer feeds the learning back into work.

This cannot become employee surveillance

The whole thing dies if it turns into employee scoring.

If people believe the organization is measuring whether they used enough AI, they will game the signals. If they believe every experiment becomes a productivity expectation, they will hide the experiments. If they believe their best workflow will simply become their new baseline workload, they will keep it private. The company will get the worst possible version of adoption: visible compliance and invisible learning.

This is why the honest intent (not just the framing) is really important here. The useful question is not “who uses AI enough?” but: where did AI change the work in a way the organization can learn from? Which loops became healthier? Which teams need better backpressure before they can delegate more? Where does a product team need a different environment because prototypes are becoming real software?

You can write policies about this, and you probably should. But governance, like learning, only becomes real through use. Once the agent touches production-adjacent work, once a product person prototypes instead of specifying, once a developer delegates root-cause analysis, once token spend becomes large enough that management wants answers, the organization discovers whether it built a learning system or just bought a lot of seats.

The messy middle is not a phase to survive

The first phase of AI adoption was about access. Who gets the tools, who has permission, who negotiates the contracts, who can try the latest model without filing a procurement ticket. That phase still matters, but it will not differentiate for long. Access to frontier intelligence can be rented. Operational control and organizational learning cannot be rented in the same way.

The next advantage is learning velocity.

Who finds the real patterns faster? Who moves discoveries from individuals to teams to organizational capabilities? Who builds backpressure into agentic loops, so agents can’t sprawl? Who distributes useful agent capabilities without turning them into monolithic enterprise agents that fit nobody? Who finally uses agentic engineering to make agile real, instead of just slapping AI onto the old ceremonies?

Nobody has this figured out yet, and I certainly have not. I have been iterating on the elastic loop for months, and every customer conversation, every internal discussion, every strange example from real work reshapes it again. That is the point! We will not understand this shift by waiting for a definitive adoption playbook from a vendor, a consultant, or an AI lab. We will understand it by instrumenting the work, sharing the messy learnings, letting others poke holes, and iterating in the open.