Machine learning (ML) has the potential to advance the state of the art in technical writing. No, I’m not talking about text generation models like Claude, Gemini, LLaMa, GPT, etc. The ML technology that might end up having the biggest impact on technical writing is embeddings.

Building intuition about embeddings

Here’s an overview of how you use embeddings and how they work. It’s geared towards technical writers who are learning about embeddings for the first time.

Input and output

Someone asks you to “make some embeddings”. What do you input? You input text.1 You don’t need to provide the same amount of text every time. E.g. sometimes your input is a single paragraph while at other times it’s a few sections, an entire document, or even multiple documents.

What do you get back? If you provide a single word as the input, the output will be an array of numbers like this:

[-0.02387, -0.0353, 0.0456]

Now suppose your input is an entire set of documents. The output turns into this:

[0.0451, -0.0154, 0.0020]

One input was drastically smaller than the other, yet they both produced an array of 3 numbers. Curiouser and curiouser. (When you work with real embeddings, the arrays will have hundreds or thousands of numbers, not 3. More on that later.)

Here’s the first key insight. Because we always get back the same number of values no matter how big or small the input text is, we now have a way to mathematically compare any two arbitrary pieces of text to each other.

But what do those numbers MEAN?

1 Some embedding models are “multimodal”, meaning you can also provide images, videos, and audio as input. This post focuses on text since that’s the medium that we work with the most as technical writers. Haven’t seen a multimodal model support taste, touch, or smell yet!

First, how to literally make the embeddings

The big service providers have made it easy. Here’s how it’s done with Gemini:

    import google.generativeai as gemini

    gemini.configure(api_key='…')

    text = 'Hello, world!'
    response = gemini.embed_content(
        model='models/text-embedding-004',
        content=text,
        task_type='SEMANTIC_SIMILARITY'
    )
    embedding = response['embedding']

The size of the array depends on what model you’re using. Gemini’s text-embedding-004 model returns an array of 768 numbers whereas Voyage AI’s voyage-3 model returns an array of 1024 numbers. This is one of the reasons why you can’t use embeddings from different providers interchangeably. (The main reason is that the numbers from one model mean something completely different than the numbers from another model.)
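One way to guard against accidentally mixing providers is to store the model name next to each embedding and check it before comparing. The sketch below is only an illustration: the record shape (`model` and `embedding` keys) and the `ensure_comparable` helper are assumptions of mine, and the arrays are zero-filled placeholders rather than real model output.

```python
def ensure_comparable(a: dict, b: dict) -> None:
    """Raise ValueError if two embedding records should not be compared."""
    if a["model"] != b["model"]:
        raise ValueError(
            f"Embeddings come from different models: {a['model']} vs {b['model']}"
        )
    if len(a["embedding"]) != len(b["embedding"]):
        raise ValueError("Embeddings have different lengths")


# Placeholder records: zeros stand in for real embeddings.
# Only the array sizes (768 and 1024) come from the text above.
gemini_record = {"model": "text-embedding-004", "embedding": [0.0] * 768}
voyage_record = {"model": "voyage-3", "embedding": [0.0] * 1024}

try:
    ensure_comparable(gemini_record, voyage_record)
    comparable = True
except ValueError as error:
    comparable = False
    print(error)
```

Checking the model name first matters because two models could return arrays of the same size whose numbers still mean completely different things.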

Does it cost a lot of money?

No.

Is it terrible for the environment?

I don’t know. After the model has been created (trained), I’m pretty sure that generating embeddings is much less computationally intensive than generating text. But it also seems to be the case that embedding models are trained in ways similar to text generation models2, with all the energy usage that implies. I’ll update this section when I find out more.

2 From You Should Probably Pay Attention to Tokenizers: “Embeddings are byproduct of transformer training and are actually trained on the heaps of tokenized texts. It gets better: embeddings are what is actually fed as the input to LLMs when we ask it to generate text.”

What model is best?

Ideally, your embedding model can accept a huge amount of input text, so that you can generate embeddings for complete pages. If you try to provide more input than a model can handle, you usually get an error. As of October 2024, voyage-3 seems to be the clear winner in terms of input size3:

For my particular use cases as a technical writer, large input size is an important factor. However, your use cases may not need large input size, or there may be other factors that are more important. See the Massive Text Embedding Benchmark (MTEB) leaderboard.
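Because input limits are counted in tokens rather than characters, a rough pre-check can tell you whether a page is likely to fit before you send it. This is only a sketch: the `estimate_tokens` and `probably_fits` helpers, the 3,000-token limit, and the sample page are all made up for illustration, and real tokenizers will give different counts.

```python
import math


def estimate_tokens(text: str, chars_per_token: float) -> int:
    """Very rough token estimate based on character count alone."""
    return math.ceil(len(text) / chars_per_token)


def probably_fits(text: str, token_limit: int, chars_per_token: float = 3.0) -> bool:
    """Pessimistic by default: fewer characters per token means more tokens."""
    return estimate_tokens(text, chars_per_token) <= token_limit


# Hypothetical page of 10,000 characters and a hypothetical 3,000-token limit.
page = "word " * 2000
HYPOTHETICAL_LIMIT = 3000

pessimistic = estimate_tokens(page, 3.0)
optimistic = estimate_tokens(page, 4.0)
print(pessimistic, optimistic)
print(probably_fits(page, HYPOTHETICAL_LIMIT))
```

The two estimates land on opposite sides of the made-up limit, which is why the exact numbers shouldn't be trusted. When in doubt, use the provider's own token counter if it offers one.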

3 These input limits are based on tokens, and each service calculates tokens differently, so don’t put too much weight into these exact numbers. E.g. a token for one model may be approximately 3 characters, whereas for another one it may be approximately 4 characters.

4 Previously, I incorrectly listed this model’s input limit as 3072. Sorry for the mistake.

Very weird multi-dimensional space

Back to the big mystery. What the hell do these numbers MEAN?!?!?!

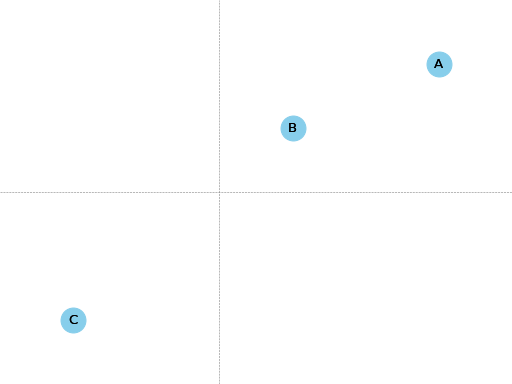

Let’s begin by thinking about coordinates on a map. Suppose I give you three points and their coordinates:

| Point | X-Coordinate | Y-Coordinate |
|---|---|---|
| A | 3 | 2 |
| B | 1 | 1 |
| C | -2 | -2 |

There are 2 dimensions to this map: the X-Coordinate and the Y-Coordinate. Each point lives at the intersection of an X-Coordinate and a Y-Coordinate.

Is A closer to B or C?

A is much closer to B.
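If you’d rather check that with arithmetic than by eyeballing it, here is a minimal sketch that measures the straight-line (Euclidean) distance between the points in the table. The `distance` helper is my own; Python’s built-in `math.dist` does the same job in version 3.8 and later.

```python
import math

# Coordinates from the table above.
points = {"A": (3, 2), "B": (1, 1), "C": (-2, -2)}


def distance(p, q):
    """Straight-line (Euclidean) distance between two points of equal length."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


a_to_b = distance(points["A"], points["B"])
a_to_c = distance(points["A"], points["C"])
print(f"A to B: {a_to_b:.2f}")
print(f"A to C: {a_to_c:.2f}")
```

A to B comes out to roughly 2.24 and A to C to roughly 6.40. Note that `distance` doesn’t care how many numbers each point has, so the same function works on 2 coordinates or 1000.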

Here’s the mental leap. Embeddings are similar to points on a map. Each number in the embedding array is a dimension, similar to the X-Coordinates and Y-Coordinates from earlier. When an embedding model sends you back an array of 1000 numbers, it’s telling you the point where that text semantically lives in its 1000-dimension space, relative to all other texts. When we compare the distance between two embeddings in this 1000-dimension space, what we’re really doing is figuring out how semantically close or far apart those two texts are from each other.

The concept of positioning items in a multi-dimensional space like this, where related items are clustered near each other, goes by the wonderful name of latent space.

The most famous example of the weird utility of this technology comes from the Word2vec paper, the foundational research that kickstarted interest in embeddings 11 years ago. In the paper they shared this anecdote:

embedding("king") - embedding("man") + embedding("woman") ≈ embedding("queen")

Starting with the embedding for king, subtract the embedding for man, then add the embedding for woman. When you look around this vicinity of the latent space, you find the embedding for queen nearby. In other words, embeddings can represent semantic relationships in ways that feel intuitive to us humans. If you asked a human “what’s the female equivalent of a king?” that human would probably answer “queen”, the same answer we get from embeddings. For more explanation of the underlying theories, see Distributional semantics.
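To see the arithmetic in motion, here is a toy sketch. The vectors are hand-made, not output from Word2vec or any real model, and I’ve given each of the three positions an invented meaning (royalty, maleness, femaleness) so that the result is easy to follow. Real embeddings have hundreds of dimensions and no such labels. Cosine similarity is used here as one common way to measure closeness.

```python
import math

# Hand-made toy vectors, NOT real model output. Each position has an
# invented meaning (royalty, maleness, femaleness) purely for illustration.
toy_embeddings = {
    "king": [0.9, 0.9, 0.1],
    "queen": [0.9, 0.1, 0.9],
    "man": [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "prince": [0.8, 0.9, 0.1],
    "apple": [0.0, 0.2, 0.2],
}


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# king - man + woman, computed position by position.
target = [
    k - m + w
    for k, m, w in zip(
        toy_embeddings["king"], toy_embeddings["man"], toy_embeddings["woman"]
    )
]

# Find the nearest word, skipping the three words used in the arithmetic.
candidates = {
    word: vector
    for word, vector in toy_embeddings.items()
    if word not in {"king", "man", "woman"}
}
nearest = max(
    candidates, key=lambda word: cosine_similarity(target, candidates[word])
)
print(nearest)
```

With these made-up numbers the nearest remaining word is queen. The toy is rigged to work, of course; the surprising part of the original research is that vectors learned from raw text behave in a similar way.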

The 2D map analogy was a nice stepping stone for building intuition but now we need to cast it aside, because embeddings operate in hundreds or thousands of dimensions. It’s impossible for us lowly 3-dimensional creatures to visualize what “distance” looks like in 1000 dimensions. Also, we don’t know what each dimension represents, hence the section heading “Very weird multi-dimensional space”.5 One dimension might represent something close to color. The king - man + woman ≈ queen anecdote suggests that these models contain a dimension with some notion of gender. And so on.

Well Dude, we just don’t know.

The mechanics of converting text into very weird multi-dimensional space are complex, as you might imagine. They are teaching machines to LEARN, after all. The Illustrated Word2vec is a good way to start your journey down that rabbit hole.

5 I borrowed this phrase from Embeddings: What they are and why they matter.

Comparing embeddings

After you’ve generated your embeddings, you’ll need some kind of “database” to keep track of what text each embedding is associated with. In the experiment discussed later, I got by with just a local JSON file:

    {
      "authors": {
        "embedding": […]
      },
      "changes/0.1": {
        "embedding": […]
      },
      …
    }

authors is the name of a page. embedding is the embedding for that page.

Comparing embeddings involves a lot of linear algebra. I learned the basics from Linear Algebra for Machine Learning and Data Science. The big math and ML libraries like NumPy and scikit-learn can do the heavy lifting for you (i.e. very little math code on your end).
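As a rough illustration of the comparison step, here is a sketch that ranks pages by cosine similarity, one common way to compare embeddings. It’s written in plain Python so that the math stays visible; NumPy or scikit-learn could replace the hand-written function. The dictionary mirrors the shape of the JSON file above, but the three-number embeddings are made up, `example-page` is a placeholder name, and `rank_related` is a hypothetical helper. With a real file you would load the dictionary with `json.load` instead.

```python
import math

# Same shape as the JSON file above, with made-up 3-number embeddings.
# "example-page" is a placeholder name, not a page from the experiment.
database = {
    "authors": {"embedding": [0.1, 0.9, 0.2]},
    "changes/0.1": {"embedding": [0.8, 0.1, 0.3]},
    "example-page": {"embedding": [0.7, 0.2, 0.3]},
}


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_related(page, db):
    """Return (other_page, similarity) pairs, most similar first."""
    source = db[page]["embedding"]
    scores = [
        (name, cosine_similarity(source, entry["embedding"]))
        for name, entry in db.items()
        if name != page
    ]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


ranked = rank_related("changes/0.1", database)
for name, score in ranked:
    print(f"{name}: {score:.3f}")
```

Comparing every page against every other page like this is fine for a small docs site. Larger collections usually call for a vector database or an approximate nearest-neighbor library.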

Applications

I could tell you exactly how I think we might advance the state of the art in technical writing with embeddings, but where’s the fun in that? You now know why they’re such an interesting and useful new tool in the technical writer toolbox… go connect the rest of the dots yourself!

Let’s cover a basic example to put the intuition-building ideas into practice and then wrap up this post.

Let a thousand embeddings bloom?

As docs site owners, I wonder if we should start freely providing embeddings for our content to anyone who wants them, via REST APIs or well-known URIs. Who knows what kinds of cool stuff our communities can build with this extra type of data about our docs?

Parting words

Three years ago, if you had asked me what 768-dimensional space is, I would have told you that it’s just some abstract concept that physicists and mathematicians need for unfathomable reasons, probably something related to string theory. Embeddings gave me a reason to think about this idea more deeply, and actually apply it to my own work. I think that’s pretty cool.

Order-of-magnitude improvements in our ability to maintain our docs may very well still be possible after all… perhaps we just need an order-of-magnitude-more dimensions!!