I recompiled an old game from WinXP and PowerPC binaries to Apple Silicon and WASM. And you can too!

You can download and play Chromatron here.

Let’s revive a classic laser puzzle

A colleague used Ghidra to add infinite lives to River Raid, a classic Atari game. Inspired, I decided to give this open-source decompiler from the NSA a try. Flipping one assembly instruction is one thing; recovering an entire game is another.

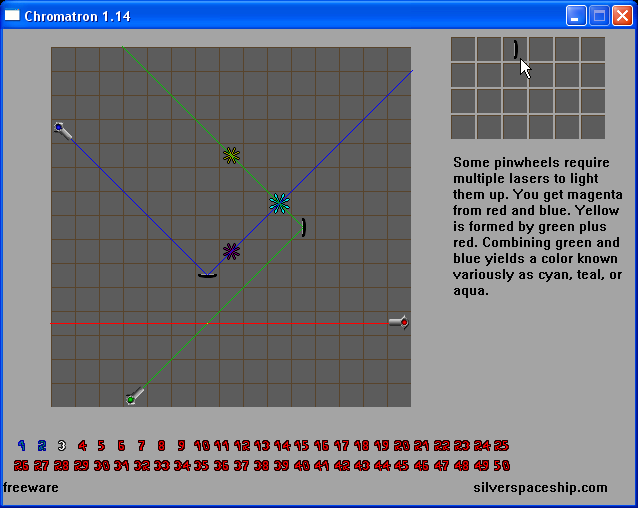

I wanted to start with something small, so there would be a reasonable chance that it works. I went with Chromatron by Sean Barrett (Silver Spaceship Software) - a puzzle game about mirrors and lasers. I like its simplicity. No story, just puzzles. And it gets challenging fast!

I played it during an internship in quantum optics, and, along with The Incredible Machine, it was my motivation for the Quantum Game with Photons.

Eight years ago, I wanted to replay it. Yet, on Mac it was only available for PowerPC, the architecture Apple abandoned in 2006 when it switched to Intel. And now, we are already 6 years into Apple Silicon.

There is a WinXP version as well, so the easiest way would be to run it in an emulator, but that was not the point.

I had no prior experience working with binary formats, let alone decompilation; I thought that xxd was a very smiley face. Now I know much more, and you can too: see We hid backdoors in ~40MB binaries and asked AI + Ghidra to find them for an overview of AI-assisted reverse engineering.

Here is my journey with some lessons learnt when vibe-porting this classic masterpiece.

Four approaches

Claude Code and Opus 4.5

First, I started with Claude Code and Opus 4.5. I downloaded the binaries and connected to Ghidra. Then, I asked it to decompile the game and recompile it for Apple Silicon. I rejected its suggestions that it would be easier to use an emulator.

Not only did it generate some assembly and C code, but Claude Code actually wrote something about game elements! This time, I used GhidrAssistMCP.

Something worked. But did it just see a few elements, or could it recreate the game? After a few back-and-forths, the first things started appearing. From the darkness, a few elements emerged. Even at this point, it required a lot of hand-holding. Each piece (board, toolbox, levels, etc.) took several iterations, and decoding the assets took a while too.

Yet, it was far from perfect. Many details were way off, and Claude was stubborn about fixing them. Much worse - it often invented things, like different fonts and texts. When asked if they were in the decompiled code, it gleefully said they were not.

Even with beams, it was a bit of a step-by-step process:

Claude Code, m2c, and Opus 4.5

So, I took a different approach, this time using the m2c decompiler to turn PowerPC machine code into C. Maybe this would work better: first generate the code, then fix it.

With the second approach, it got the positions of things right on the first try. But it had trouble decoding assets.

It did eventually decode assets correctly, but by that point the first approach had already progressed further. I abandoned this route.

Cursor and GPT-5.2-Codex

I got excited by the news that GPT-5.2-Codex was able to create a browser. While there was some skepticism around that, I decided to give it a go. This time I went with Ghidra MCP again, but misconfigured something, so the model ended up using Ghidra’s built-in headless mode instead, which worked better.

It needed minimal feedback during sessions lasting 1-2 hours. It was slow, but it didn’t pester me with questions constantly, and I couldn’t believe it when I saw the game working.

Some things were broken (like the laser beam), but fixing them took only one or two prompts, not an endless cycle like with the previous model. Wow, it was actually a playable game, with some rough edges here and there.

I implemented a way to compare the results with screenshots of the original. On their own, LLMs were very eager to say that something looked the same. Only with a pixelwise comparison did they get real feedback.
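A pixelwise check can be very small. Below is a minimal sketch in Rust (the language used later for the final port), assuming both the render and the reference screenshot are already decoded into same-sized, row-major buffers of packed `0x00RRGGBB` pixels. `Frame`, `DiffReport`, and `compare_frames` are hypothetical names for illustration, not the tool used in this project:

```rust
/// A frame as a row-major buffer of packed 0x00RRGGBB pixels (assumed layout).
pub struct Frame {
    pub width: usize,
    pub height: usize,
    pub pixels: Vec<u32>,
}

/// Result of comparing a render against a reference screenshot.
#[derive(Debug, PartialEq)]
pub struct DiffReport {
    pub total: usize,
    pub identical: usize,
    /// Bounding box (min_x, min_y, max_x, max_y) of differing pixels, if any.
    pub bbox: Option<(usize, usize, usize, usize)>,
}

/// Compare two frames pixel by pixel; refuses frames of different sizes.
pub fn compare_frames(render: &Frame, reference: &Frame) -> Result<DiffReport, String> {
    let total = render.width * render.height;
    if render.width != reference.width || render.height != reference.height {
        return Err(format!(
            "size mismatch: {}x{} vs {}x{}",
            render.width, render.height, reference.width, reference.height
        ));
    }
    if render.pixels.len() != total || reference.pixels.len() != total {
        return Err("pixel buffer length does not match width * height".to_string());
    }
    let mut identical = 0;
    let mut bbox: Option<(usize, usize, usize, usize)> = None;
    for (i, (a, b)) in render.pixels.iter().zip(&reference.pixels).enumerate() {
        if a == b {
            identical += 1;
            continue;
        }
        // Grow the bounding box to include this differing pixel.
        let (x, y) = (i % render.width, i / render.width);
        bbox = Some(match bbox {
            None => (x, y, x, y),
            Some((x0, y0, x1, y1)) => (x0.min(x), y0.min(y), x1.max(x), y1.max(y)),
        });
    }
    Ok(DiffReport { total, identical, bbox })
}
```

Reporting the bounding box of the differences, not just a count, gives the model a concrete region to look at instead of room to argue that the images match.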

And, step by step, it worked. Using Gemini 3 Pro externally to inspect images helped, but was not enough.

Starting fresh with Opus 4.6

I took almost a month’s break from the project, primarily to do the writeup and charts for BinaryAudit, a recent project on using Ghidra to detect backdoors in binaries.

A month in human time is ages in AI time. In the meantime, two models came out, Opus 4.6 and GPT-5.3-Codex, and both can be used with a lot of success. I first used GPT-5.3-Codex to slightly polish the C++ version.

Then I decided: why not start from scratch, this time with Opus 4.6? Since my earlier attempt with Opus 4.5 had stalled, I wondered whether this one would work. I picked Rust this time. I fed it both binaries, but the model chose to work primarily from the Win32 one, using the Mac PPC one only for cross-referencing.

Aaaand - this time even the first approach worked.

Maybe it helped having some experience with the tech stack. Or because I used Rust. Or because of PyGhidra. But most likely the primary reason is the models themselves.

Reviving most of the game was simpler than expected. Yet, there is this uncanny valley of “almost done”. I was almost there, but there was one step left - the font was off.

A sane approach would be to stop here. Only minor positioning and font differences were visible, and only to people who know the original version.

Anyway, very likely the font was different on the WinXP version (the one I know) and the PowerPC version (the one I didn’t). Using a standard font would be fair game… if it weren’t for my obsession.

Models (all of them) tried to persuade me it was not worth the fight. I begged to differ.

Models suggested Geneva, MS Sans Serif, sserife.fon, and a few others. They kept trying to gaslight me into thinking the font was correct, just at a different resolution. So I vibe-coded a manual tool to compare fonts with the reference without needing to recompile everything. It turned out to be VGASYS all along: the Windows SYSTEM_FONT, the default GDI font when no font is explicitly selected.
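The core of such a tool can be a simple scoring loop. A minimal sketch in Rust, assuming glyphs have already been cropped from the reference screenshot and rasterized from each candidate font into 1-bit bitmaps (that rasterizing step is left out); `glyph`, `glyph_distance`, and `rank_fonts` are hypothetical names:

```rust
/// A 1-bit glyph bitmap: `rows[y][x]` is true where the pixel is inked.
pub type Glyph = Vec<Vec<bool>>;

/// Build a glyph from text art: '#' is inked, anything else is blank.
pub fn glyph(rows: &[&str]) -> Glyph {
    rows.iter().map(|r| r.chars().map(|c| c == '#').collect()).collect()
}

/// Pixel lookup that treats anything outside the bitmap as blank.
fn inked(g: &Glyph, x: usize, y: usize) -> bool {
    g.get(y).and_then(|row| row.get(x)).copied().unwrap_or(false)
}

/// Number of differing pixels over the union of both bitmaps' extents.
pub fn glyph_distance(a: &Glyph, b: &Glyph) -> usize {
    let h = a.len().max(b.len());
    let w = a.iter().chain(b.iter()).map(|row| row.len()).max().unwrap_or(0);
    let mut diff = 0;
    for y in 0..h {
        for x in 0..w {
            if inked(a, x, y) != inked(b, x, y) {
                diff += 1;
            }
        }
    }
    diff
}

/// Score each candidate font against the reference glyphs (lower is better),
/// sorted best first. A glyph missing from a candidate costs its full ink.
pub fn rank_fonts<'a>(
    reference: &[(char, Glyph)],
    candidates: &[(&'a str, Vec<(char, Glyph)>)],
) -> Vec<(&'a str, usize)> {
    let empty: Glyph = Vec::new();
    let mut scores: Vec<(&'a str, usize)> = candidates
        .iter()
        .map(|(name, glyphs)| {
            let total: usize = reference
                .iter()
                .map(|(ch, want)| {
                    let got = glyphs
                        .iter()
                        .find(|(c, _)| c == ch)
                        .map(|(_, g)| g)
                        .unwrap_or(&empty);
                    glyph_distance(want, got)
                })
                .sum();
            (*name, total)
        })
        .collect();
    scores.sort_by_key(|&(_, score)| score);
    scores
}
```

A candidate that differs only in resolution or spacing still accumulates mismatches here, which is exactly the kind of difference the models kept waving away.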

So, here we go: 307,199 out of 307,200 pixels identical to the original. Not bad! The single remaining pixel is a render-order difference between the laser beam and the grid overlay.
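For scale: 307,200 is exactly 640 × 480, which suggests a 640×480 frame (an inference from the count), so the one differing pixel is about 0.0003% of the image. A render-order difference like this comes from the painter's algorithm: where two layers overlap, whichever is drawn last wins. The toy sketch below uses made-up layer shapes and colors, not the game's actual drawing code, to show that swapping the draw order changes exactly the overlapping pixel:

```rust
/// Toy frame size; the layer shapes and colors below are made up.
pub const W: usize = 4;
pub const H: usize = 3;
pub const ROW: usize = 1; // the grid line runs along this row
pub const COL: usize = 2; // the laser beam runs down this column

pub const GRID: u32 = 0x0040_4040; // dark grey
pub const BEAM: u32 = 0x00FF_0000; // red

#[derive(Clone, Copy)]
pub enum Layer {
    Grid,
    Beam,
}

/// Painter's algorithm: draw layers in order onto a blank frame,
/// so later layers overwrite earlier ones where they overlap.
pub fn render(order: &[Layer]) -> Vec<u32> {
    let mut fb = vec![0u32; W * H];
    for layer in order {
        match layer {
            Layer::Grid => {
                for x in 0..W {
                    fb[ROW * W + x] = GRID;
                }
            }
            Layer::Beam => {
                for y in 0..H {
                    fb[y * W + COL] = BEAM;
                }
            }
        }
    }
    fb
}
```

Rendering `[Layer::Beam, Layer::Grid]` and `[Layer::Grid, Layer::Beam]` gives frames that differ in a single pixel, the one where the row and column cross.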

This is a screenshot from my Apple Silicon MacBook. The only difference is the system-dependent app frame. I also compiled it for Windows, Linux, and WASM.

Summary of approaches taken

Lessons learnt

New models, new possibilities. Sometimes new models don’t just make things slightly better; they open entirely new routes.

The stronger the model, the less hand-holding it needs. One model provides little help, another a bit more, and yet another basically does it end-to-end.

Hallucinations are worse than no answer. A model inventing nonexistent code details is more harmful than one admitting ignorance.

Define your goals and non-goals. It makes a difference if you want a pixel-perfect recompilation, a remaster, or a remake.

References are gold. Both for you (memory can be faulty) and for the AI.

AI can learn to use Ghidra on its own. Setting up Ghidra MCP was painstaking and fragile. In one attempt, I misconfigured MCP — and the model simply used Ghidra’s built-in headless mode instead, which worked better. With PyGhidra, it was even smoother.

Models excel at code, but not at visual inspection. If there are visible differences (e.g. a small element is RED, but should be BLACK), a model will gleefully say that there are no differences, or that they are not important.

Pick a convenient target language. Decompiled code is pseudo-C, so one may think that C is the best target. For a human, it likely is. But LLMs are wonderful translators and can turn it into any language they know. My go-to here is Rust, as it is still low-level, but more concise and safer, for humans and machines alike. It also has good tooling for building for other systems.
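As a made-up illustration of that translation step: the comment shows Ghidra-style pseudo-C (invented, not taken from Chromatron), and the code below it is one possible idiomatic Rust rendering. Raw pointer-plus-offset reads become named fields, and the `-1` sentinel becomes an `Option`; `Board` and `cell` are hypothetical names:

```rust
// Hypothetical Ghidra-style pseudo-C (invented for illustration):
//
//   int FUN_00401a30(int param_1, int param_2, int param_3)
//   {
//     if ((param_2 < 0) || (*(int *)(param_1 + 4) <= param_2)) return -1;
//     if ((param_3 < 0) || (*(int *)(param_1 + 8) <= param_3)) return -1;
//     return *(int *)(param_1 + 0xc + (param_3 * *(int *)(param_1 + 4) + param_2) * 4);
//   }
//
// Reading the offsets: +4 is a width, +8 a height, and +0xc the start of an
// inline array of 4-byte cells indexed as y * width + x.

/// The struct implied by those offsets (field names are guesses).
pub struct Board {
    pub width: i32,
    pub height: i32,
    pub cells: Vec<i32>,
}

impl Board {
    /// Cell at (x, y); `None` where the pseudo-C returned the -1 sentinel.
    pub fn cell(&self, x: i32, y: i32) -> Option<i32> {
        if x < 0 || x >= self.width || y < 0 || y >= self.height {
            return None;
        }
        self.cells.get((y * self.width + x) as usize).copied()
    }
}
```

A faithful port may still need to reproduce the `-1` at call sites that depend on it; the `Option` only makes that contract explicit.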

Pick a popular rendering framework. Simple DirectMedia Layer (SDL) worked best. Even though SDL3 is the newest, the AI went for SDL2 due to its more mature bindings.

Change your rendering framework if needed. I ended up dropping SDL2 in favor of pure-Rust winit+softbuffer, which eliminated C dependencies and made the WASM build straightforward.
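Part of why this is simple: the game draws into a plain pixel buffer in memory, and softbuffer only presents it. As far as I understand softbuffer's documentation, it expects one `u32` per pixel in 0RGB layout (`0x00RRGGBB`), row-major from the top-left. Below is a minimal sketch of such a CPU-side framebuffer in Rust, leaving out the winit/softbuffer window setup (whose API differs between crate versions); `Framebuffer`, `pack_rgb`, and `fill_rect` are hypothetical names, not code from the port:

```rust
/// Pack 8-bit channels into the 0RGB layout: 0x00RRGGBB.
pub fn pack_rgb(r: u8, g: u8, b: u8) -> u32 {
    (u32::from(r) << 16) | (u32::from(g) << 8) | u32::from(b)
}

/// A CPU-side framebuffer: row-major, origin at the top-left, one u32 per pixel.
pub struct Framebuffer {
    pub width: usize,
    pub height: usize,
    pub pixels: Vec<u32>,
}

impl Framebuffer {
    pub fn new(width: usize, height: usize) -> Self {
        Self { width, height, pixels: vec![0; width * height] }
    }

    /// Fill an axis-aligned rectangle, clipped to the framebuffer bounds.
    pub fn fill_rect(&mut self, x: usize, y: usize, w: usize, h: usize, color: u32) {
        let x_end = x.saturating_add(w).min(self.width);
        let y_end = y.saturating_add(h).min(self.height);
        let x_start = x.min(x_end); // avoid an inverted slice range when x is off-screen
        for row in y..y_end {
            let base = row * self.width;
            for px in &mut self.pixels[base + x_start..base + x_end] {
                *px = color;
            }
        }
    }
}
```

Since this part is plain Rust with no C dependencies, it should build for `wasm32` unchanged; only the windowing and presentation layer is platform-specific.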

Some tricky parts go smoothly (e.g. assets in an obscure format).
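For a sense of what decoding an asset format can involve: many Windows-era games stored sprites as 8-bit palette-indexed bitmaps. The sketch below is generic and hypothetical (Chromatron's actual asset format is not described here, and the RGB-triple palette layout is an assumption), showing how indexed pixels plus a palette turn into packed `0x00RRGGBB` pixels; `decode_indexed` is an illustrative name:

```rust
/// Decode 8-bit palette-indexed pixels into packed 0x00RRGGBB values.
/// `stride` is the number of bytes per stored row (>= width, e.g. padded rows);
/// `bottom_up` reads rows last-to-first, as Windows BMP files usually store them.
pub fn decode_indexed(
    indices: &[u8],
    width: usize,
    height: usize,
    stride: usize,
    palette: &[[u8; 3]],
    bottom_up: bool,
) -> Result<Vec<u32>, String> {
    if stride < width || indices.len() < stride * height {
        return Err("pixel data too short for the given dimensions".to_string());
    }
    let mut out = Vec::with_capacity(width * height);
    for row in 0..height {
        let src_row = if bottom_up { height - 1 - row } else { row };
        let start = src_row * stride;
        for &idx in &indices[start..start + width] {
            // Look up the palette entry; an out-of-range index is a decoding error.
            let [r, g, b] = *palette
                .get(usize::from(idx))
                .ok_or_else(|| format!("palette index {idx} out of range"))?;
            out.push((u32::from(r) << 16) | (u32::from(g) << 8) | u32::from(b));
        }
    }
    Ok(out)
}
```

Details like row padding and bottom-up storage are exactly what makes a decoded image come out skewed or upside down, and they are easy to check against a reference screenshot.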

Some small things are surprisingly hard. I spent most of my time trying to get the font right.

Don’t be afraid to start over. One common pitfall is the sunk cost fallacy: you have already picked a framework and started, so you want to keep polishing. But with AI, it is sometimes better to start over, knowing what you know. Think of it as a roguelike, in which you die, then start over, but richer in knowledge.

Pixel-perfect clones make you notice everything: small features, design choices, the UI standards of a given era, but also glitches, misalignments, and other things that could be improved.

Perspective

Vibe-decompiling is taking off

The Chromatron binary is just 40kB compressed (96kB unpacked). With a bigger codebase it might be harder, but people are doing it. With even newer models, which are better at such tasks, it will become more feasible.

A lot is happening in this space. See, for example, Christopher Ehrlich ports SimCity from C into TypeScript with 5.3-Codex, or The Long Tail of LLM-Assisted Decompilation.

A month ago there was a Hacker News thread, Show HN: Ghidra MCP Server – 110 tools for AI-assisted reverse engineering, and two weeks ago a comment in our We hid backdoors in ~40MB binaries and asked AI + Ghidra to find them discussion mentioned a Rust CLI for Ghidra.

See also the wonderful writeup Resurrecting Crimsonland by banteg, on decompiling a 2003 top-down shooter with a very clear goal of being faithful:

the goal is a complete rewrite that matches the original windows binary behavior exactly. if the original has a bug, the rewrite has the same bug. if there’s a texture that’s one pixel too small (there is), i replicate that too. the executable is the spec, and we’re writing the spec back into source code.

I guess it needs a name.

A word of caution

If you deal with decompilation, be aware of AI guardrails. Passing disassembled code to an LLM might get your request shadow-redirected (e.g. GPT-5.3-Codex silently downgrading to GPT-5.2) or even get your account flagged (as happened to a friend). AI labs try to prevent their models from being used for malware, though they understand the context better than they did 6 months ago.

Their precautions are understandable, but it is definitely something to keep in mind when you embark on your own reverse-engineering adventures.

What’s next

I think we can go further. First, by reviving games and old pieces of software that are not playable on your hardware. Or by porting games to other devices: a grueling and prohibitively expensive task, and one of the reasons most games are not published for macOS.

The current approach is to wrap everything in Docker, Electron, or an emulator. For example, while another game, Supaplex, weighs 287 kB, its Steam remaster weighs around 200 MB.

But now, we can go beyond that and natively port to another system. There is still effort involved (and a lot of love to pay attention to tiny details), but it is no longer a many-month project restricted to seasoned reverse engineers. We get both performance and size close to the original.

What’s now

In the meantime, you can play my recompiled Chromatron online! The web version saves your progress automatically.

Which game (or maybe other piece of software) would you like to bring back to life?