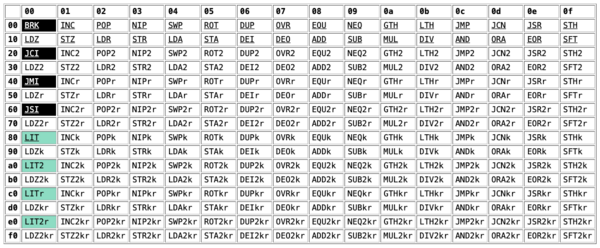

Uxn is a fictional CPU, used as a target for various applications in the Hundred Rabbits ecosystem. It's a simple stack machine with 256 instructions:

My implementation of the Uxn CPU now has an x86-64 assembly implementation, which is about twice as fast as my Rust implementation. This required porting about 2000 lines of ARM64 assembly to x86-64, which was accomplished with the help of a robot buddy.

Let me provide a little more context.

A few years back, I wrote a Rust implementation of the CPU and peripherals, which was 10-20% faster than the reference implementation. For more background info, see that project's writeup:

The Rust implementation is fast, but suffers from the usual downsides of a bytecode-based VM: the main dispatch statement is an unpredictable branch.
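To make the dispatch problem concrete, here's a minimal sketch of a match-based interpreter loop. The `MiniVm` type and its three opcodes are hypothetical toy stand-ins, not the raven-uxn code; the point is only that every instruction funnels through a single `match`, which typically compiles to one shared indirect branch that the CPU has to predict for every opcode.

```rust
/// Hypothetical toy VM for illustration only (not the raven-uxn types).
struct MiniVm {
    stack: [u8; 256],
    sp: u8,
}

impl MiniVm {
    fn new() -> Self {
        MiniVm { stack: [0; 256], sp: 0 }
    }
    fn push(&mut self, v: u8) {
        self.stack[self.sp as usize] = v;
        self.sp = self.sp.wrapping_add(1);
    }
    fn pop(&mut self) -> u8 {
        self.sp = self.sp.wrapping_sub(1);
        self.stack[self.sp as usize]
    }
}

/// Runs toy bytecode until the halt opcode (0x00) and returns the top of
/// the stack, or `None` if execution runs off the end or hits an unknown op.
fn run(vm: &mut MiniVm, code: &[u8]) -> Option<u8> {
    let mut pc = 0usize;
    loop {
        let op = *code.get(pc)?;
        pc += 1;
        // Every instruction passes through this one dispatch point.
        match op {
            0x00 => return Some(vm.pop()), // halt
            0x01 => {
                // push the literal byte that follows the opcode
                let v = *code.get(pc)?;
                pc += 1;
                vm.push(v);
            }
            0x02 => {
                // add the top two bytes, wrapping at 8 bits
                let b = vm.pop();
                let a = vm.pop();
                vm.push(a.wrapping_add(b));
            }
            _ => return None,
        }
    }
}
```

Calling `run(&mut MiniVm::new(), &[0x01, 2, 0x01, 3, 0x02, 0x00])` pushes 2 and 3, adds them, and halts with `Some(5)`.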

I then wrote an assembly implementation of the interpreter, which proved to be about 30% faster than the Rust version. This was hard: it took several days of work, and there were lingering bugs that I didn't discover until I added a fuzz tester to check for discrepancies between the Rust and assembly implementations.

The assembly implementation is written for an ARM64 target, for two reasons:

- I'm working on an ARM MacBook

- Writing ARM assembly by hand is a fun intellectual exercise because the ISA is pleasantly orthogonal and well-organized, while x86 assembly is... less so

My blog post about the assembly implementation concludes with an optimistic statement:

On a brighter note, it should be relatively easy to port all of the assembly code to x86-64, but I'll leave that as a challenge for someone else!

I wrote that back in late 2024, and no one had yet risen to the challenge, so I decided to do it (kinda) myself. Because this is early 2026, you may know where this is going: the first draft was written autonomously by Claude Code.

Yes, that's right – it's finally my turn to test out the hip new coding agents on a problem that I know relatively well.

(This blog post was 100% written by me, a fleshy human, because I think that passing off AI-written text as human-authored is an insult to the reader)

How did it do?

In short, it did a great job of going from "zero to one": if I was given a blank text editor and asked to write the x86 implementations of every Uxn opcode, I would have done much worse.

The resulting implementation worked, passing both my unit tests and the fuzzer.

This was all basically autonomous: I deliberately did not help the agent with any implementation or debugging details, limiting my feedback to high-level strategy.

The assembly itself was of middling quality – and I then spent a while improving it – but the agent provided an invaluable boost of momentum to kick off the work.

The whole thing cost about $29, billed through an enterprise plan. I'm not sure how this would have gone with an unmetered plan, e.g. whether I would have hit usage limits midway through the process.

The implementation took a few hours of work, but only 15-20 minutes of hands-on time; the main speed limit was me noticing that it was waiting for approval to run a new command.

(This was all running on a disposable Oxide Computer VM, so I probably should have just run it with --dangerously-skip-permissions)

The implementation process

I started by giving the agent an overview of the problem and a description of my existing implementation:

The raven-uxn project implements a fictional CPU. There are two implementations: a safe Rust implementation, and a native code implementation. In the native implementation, we have hand-written assembly functions for each of the 256 opcodes, written with tail recursion so each instruction jumps to the next instruction. This is fast because there's no big case statement dispatching. However, the x86 implementation isn't yet working. Get it working: it should build with cargo build --features=native.

It successfully added an x86 assembly backend and got it compiling, which required a few rounds of tweaking the assembly syntax and re-running cargo build. At this point, I told the agent how to run unit tests:

Now that it's building, it should pass tests with cargo test -praven-uxn --features=native.

The agent found a bunch of failing unit tests, and fixed them mostly autonomously. My contribution at this stage was telling it to fix one opcode at a time, because it was trying to devise a Grand Plan to fix every single failing test in one pass, and going off the rails. Because failures typically presented as SIGSEGV instead of assertion errors, I also instructed the agent to run tests one by one – otherwise, it was impossible to tell which specific opcode test caused the segfault.

Finally, once tests were passing, I told the agent how to run the fuzzer:

Great, now let's test it with fuzzing. You can run cargo +nightly fuzz run --release fuzz-native -- -max_total_time=10 -verbosity=0 to fuzz for 10 seconds and see if it finds anything.

A bunch more failures cropped up at this point, and it continued to debug them autonomously. Interestingly, at this point it started writing ad-hoc gdb scripts to probe the interpreter's internal state:

break _DEI

commands

info registers rsp rbp

x/4gx $rsp+0xd0

x/4gx $rsp+0xd8

x/4gx $rsp+0xe0

cont

end

break raven_uxn::native::dei_entry

run --test-threads=1 dei

info registers rdi rsi

x/4gx $rsi

p *(raven_uxn::native::DeviceHandle*)$rsi

The internal thinking traces are a fascinating artifact; the thing debugs like a goldfish with logorrhea:

The comment says "orig - 8 - 48 - 0x98 = orig - 0xd0" and then "[orig+8] = [rsp + 0xd0 + 8] = [rsp + 0xd8]". But this is wrong. If "orig" = rsp BEFORE the call (i.e., rsp_caller), then: 7th arg is at [orig - 8] BEFORE the call (pushed last by caller)... no, in SysV x86_64:

Actually the comment's definition of "orig" is inconsistent. Let me figure out what "orig" means in the comment:

So "orig" is the reference point where the return address sits at [orig - 8], the 7th argument at [orig + 8], and the 8th argument at [orig + 16]. This matches the x86_64 SysV ABI where after the call instruction, the stack layout has the return address at the top and the caller's arguments positioned above it. I'm working through the stack frame layout and realizing the comment's offset claims don't align with the actual memory addresses—the math just doesn't check out. But from the debugger output, I can see the 7th argument (vm) is actually at [rsp+0xd...], which gives me the concrete data I need to move forward.

After a bunch more round-trips through the fuzzer, it stopped finding bugs and just started running the fuzzer for longer and longer periods. After 60 seconds of fuzzing with no hits, I declared success.

Why did this work?

This won't be a surprising sentiment if you've read blog posts of this nature: the agent worked well because there was a comprehensive test suite and a fuzzing harness, so it could easily close the loop.

The first implementation did not compile; once it compiled, it did not pass unit tests; once it passed unit tests, it did not pass fuzz testing. Having all of these layers of (machine-checkable) tests was necessary to get a fully working implementation.
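The last of those layers, differential fuzzing, boils down to running two implementations on the same input and flagging any disagreement. Here's a toy sketch of that loop; the `XorShift` generator, `first_divergence`, and the two divide functions are all hypothetical stand-ins (the real harness uses cargo fuzz and compares full VM state, which this does not attempt to reproduce).

```rust
/// Tiny xorshift PRNG so the sketch needs no external crates.
struct XorShift(u64);

impl XorShift {
    fn next(&mut self) -> u64 {
        let mut x = self.0;
        x ^= x << 13;
        x ^= x >> 7;
        x ^= x << 17;
        self.0 = x;
        x
    }
}

/// Runs both implementations on `rounds` pseudo-random inputs and returns
/// the first input pair on which they disagree, if any.
fn first_divergence(
    reference: impl Fn(u16, u16) -> u16,
    candidate: impl Fn(u16, u16) -> u16,
    seed: u64,
    rounds: usize,
) -> Option<(u16, u16)> {
    let mut rng = XorShift(seed);
    for _ in 0..rounds {
        let bits = rng.next();
        let (a, b) = (bits as u16, (bits >> 16) as u16);
        if reference(a, b) != candidate(a, b) {
            return Some((a, b));
        }
    }
    None
}

/// Reference 16-bit divide, where dividing by zero yields zero.
fn div_ref(a: u16, b: u16) -> u16 {
    if b == 0 { 0 } else { a / b }
}

/// Deliberately buggy candidate: it only looks at the low byte of `a`.
fn div_buggy(a: u16, b: u16) -> u16 {
    if b == 0 { 0 } else { (a & 0xff) / b }
}
```

With a non-zero seed, `first_divergence(div_ref, div_buggy, 1, 1000)` returns a counterexample almost immediately, while comparing `div_ref` against itself returns `None`. Note the limit of this style of check: it only catches bugs that change the compared output.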

I suspect it also worked because the problem is translation flavored: there was a full ARM64 assembly implementation, and translating from one assembly flavor to another is easier than writing it from a high-level specification (or even from the Rust code).

How was the code?

I'm not an x86 assembly expert, but even I could tell that there were a few questionable decisions. Let me give you a few examples.

Claude seemed to get caller / callee registers confused: it properly handled callee-saved registers in the function prologue and epilogue, but also insisted on saving them before doing a call to an external function. This increased stack usage and added a bunch of unnecessary instructions to each external function call:

; Save all interpreter state to the stack frame and set up args for C call

; C calling convention: arg1=rdi (VM ptr), arg2=rsi (DeviceHandle ptr)

.macro precall

; Write stack indices back through the pointers saved at entry

mov rax, qword ptr [rsp + 0x30] ; stack_index pointer

mov byte ptr [rax], r12b

mov rax, qword ptr [rsp + 0x38] ; ret_index pointer

mov byte ptr [rax], r14b

; Save interpreter registers

; [Human note: all of these are callee-saved!]

mov qword ptr [rsp + 0x58], rbx

mov qword ptr [rsp + 0x60], r12

mov qword ptr [rsp + 0x68], r13

mov qword ptr [rsp + 0x70], r14

mov qword ptr [rsp + 0x78], r15

mov qword ptr [rsp + 0x80], rbp

; Set up args: VM ptr and DeviceHandle ptr

mov rdi, qword ptr [rsp + 0x40]

mov rsi, qword ptr [rsp + 0x48]

.endm

It was also obsessed with using eax for everything, to its own detriment! The functions would often shuffle data into eax initially, then move it to a different register to make room for putting more data into eax. In the System-V ABI, there are nine scratch registers available, and I found that I could often tighten the code by using them:

; Claude's initial DIV2 implementation

;

; Note that it pushes to the x86 stack because

; it keeps using rax for temporary values!

_DIV2:

movzx eax, byte ptr [rbx + r12]

stk_pop

movzx ecx, byte ptr [rbx + r12]

stk_pop

shl ecx, 8

or eax, ecx ; b (divisor, top short)

movzx ecx, byte ptr [rbx + r12]

stk_pop

movzx edx, byte ptr [rbx + r12]

shl edx, 8

or ecx, edx ; a (dividend, second short)

; 16-bit unsigned divide: a / b

push rax ; save divisor (b) onto x86 stack [?!]

mov eax, ecx ; dividend (a) in eax

movzx eax, ax

xor edx, edx

pop rcx ; restore divisor into ecx

movzx ecx, cx

test cx, cx

jz 1f

div cx ; ax = a / b

jmp 2f

1:

xor eax, eax ; div by zero → 0

2:

movzx r8d, al ; save result_lo

shr eax, 8

mov byte ptr [rbx + r12], al ; store result_hi at current pos

stk_push r8b ; push result_lo on top

next

;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;;

; Version tweaked by human

;

; This version loads the divisor / dividend directly into ecx and eax

_DIV2:

movzx ecx, byte ptr [rbx + r12]

stk_pop

movzx r9d, byte ptr [rbx + r12]

stk_pop

shl r9d, 8

or ecx, r9d ; ecx = b (divisor)

movzx eax, byte ptr [rbx + r12]

stk_pop

movzx edx, byte ptr [rbx + r12]

shl edx, 8

or eax, edx ; eax = a (dividend), already zero-extended

; 16-bit unsigned divide: a / b

xor edx, edx

test cx, cx

jz 1f

div cx ; ax = a / b

jmp 2f

1:

xor eax, eax ; div by zero → 0

2:

mov r8b, al ; save result_lo

shr eax, 8

mov byte ptr [rbx + r12], al ; store result_hi

stk_push r8b

next
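For readers who don't want to trace registers, here's a minimal Rust sketch of the behavior both listings implement, read off the assembly and its comments. The `Stack` type is a hypothetical model (not the raven-uxn type), and starting the index at 0xff is an assumption made so that the first push lands in slot 0.

```rust
/// Hypothetical model of a 256-byte stack; `index` names the top byte and
/// wraps at 8 bits, like the byte-sized stack index in the listings.
struct Stack {
    data: [u8; 256],
    index: u8,
}

impl Stack {
    fn new() -> Self {
        // Assumption: an empty stack starts at 0xff so the first push
        // wraps around to slot 0.
        Stack { data: [0; 256], index: 0xff }
    }
    fn push(&mut self, v: u8) {
        self.index = self.index.wrapping_add(1);
        self.data[self.index as usize] = v;
    }
    fn pop(&mut self) -> u8 {
        let v = self.data[self.index as usize];
        self.index = self.index.wrapping_sub(1);
        v
    }
    /// Shorts are pushed high byte first, so the low byte ends up on top.
    fn push2(&mut self, v: u16) {
        self.push((v >> 8) as u8);
        self.push(v as u8);
    }
    fn pop2(&mut self) -> u16 {
        let lo = self.pop() as u16;
        let hi = self.pop() as u16;
        (hi << 8) | lo
    }
}

/// DIV2: pop the divisor b, pop the dividend a, push a / b
/// (or 0 when b is zero).
fn div2(s: &mut Stack) {
    let b = s.pop2();
    let a = s.pop2();
    let q = if b == 0 { 0 } else { a / b };
    s.push2(q);
}
```

Pushing 0x1234 and then 0x0012 and calling `div2` leaves 0x0102 on the stack; a zero divisor leaves 0.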

Finally, Claude was hesitant to use 8- and 16-bit operations, preferring to use 32-bit operations then mask the results. This behavior is likely a legacy of translating the ARM assembly, which used the "operation then mask" pattern everywhere because the ISA does not have instructions for 8 or 16-bit wrapping arithmetic.
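The two styles compute the same values, which is why the translated code was correct but wordier than it needed to be. A small Rust sketch of the equivalence (the function names are made up for illustration):

```rust
/// "Operation then mask": widen to 32 bits, add, keep only the low byte.
fn add8_masked(a: u8, b: u8) -> u8 {
    ((a as u32 + b as u32) & 0xff) as u8
}

/// Native 8-bit wrapping add: the carry out of bit 7 is simply dropped.
fn add8_wrapping(a: u8, b: u8) -> u8 {
    a.wrapping_add(b)
}

/// The same pair of styles for 16-bit subtraction.
fn sub16_masked(a: u16, b: u16) -> u16 {
    ((a as u32).wrapping_sub(b as u32) & 0xffff) as u16
}

fn sub16_wrapping(a: u16, b: u16) -> u16 {
    a.wrapping_sub(b)
}
```

On x86, the narrow versions can map onto byte- and word-sized register operations, so the explicit mask becomes unnecessary.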

Fixing these idiosyncrasies made a difference, squeezing another non-trivial speedup out of the test ROMs that I was benchmarking:

| | Fibonacci | Mandelbrot |
|---|---|---|
| Rust | 4.28 ms | 341 ms |
| x86 (initial) | 2.45 ms | 213 ms |
| x86 (improved) | 1.70 ms | 187 ms |

One caveat applies: this was using Sonnet 4.6, and it's possible that Opus 4.6 would do a better job out of the gate. I also didn't yet have a closed-loop harness for performance testing, so I couldn't just tell the AI to make it faster.

Debugging a human-introduced bug

After doing all of this human cleanup, the fuzzer found a crash:

INFO: Running with entropic power schedule (0xFF, 100).

INFO: Seed: 3840651835

INFO: Loaded 1 modules (4523 inline 8-bit counters): 4523 [0x55e78ed1fcd0, 0x55e78ed20e7b),

INFO: Loaded 1 PC tables (4523 PCs): 4523 [0x55e78ed20e80,0x55e78ed32930),

fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native: Running 1 inputs 1 time(s) each.

Running: ../foo.rom

=================================================================

==2999==ERROR: AddressSanitizer: attempting free on address which was not malloc()-ed: 0x7ede9fd08800 in thread T0

#0 0x55e78ec25556 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x10e556) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#1 0x55e78ec7a16e (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x16316e) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#2 0x55e78ec7bf41 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x164f41) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#3 0x55e78ec84ae8 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x16dae8) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#4 0x55e78ec85448 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x16e448) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#5 0x55e78ec8422d (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x16d22d) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#6 0x55e78ec8b9d5 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x1749d5) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#7 0x55e78eca66d6 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x18f6d6) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#8 0x55e78ecaf002 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x198002) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#9 0x55e78eccd4f6 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x1b64f6) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#10 0x7fcea07fdd8f (/lib/x86_64-linux-gnu/libc.so.6+0x29d8f) (BuildId: 095c7ba148aeca81668091f718047078d57efddb)

#11 0x7fcea07fde3f (/lib/x86_64-linux-gnu/libc.so.6+0x29e3f) (BuildId: 095c7ba148aeca81668091f718047078d57efddb)

#12 0x55e78eb98a24 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x81a24) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

0x7ede9fd08800 is located 16384 bytes after 65536-byte region [0x7ede9fcf4800,0x7ede9fd04800)

allocated by thread T0 here:

#0 0x55e78ec259c9 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x10e9c9) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#1 0x55e78ec801fc (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x1691fc) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#2 0x55e78ec79954 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x162954) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#3 0x55e78ec7bf41 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x164f41) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#4 0x55e78ec84ae8 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x16dae8) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#5 0x55e78ec85448 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x16e448) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#6 0x55e78ec8422d (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x16d22d) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#7 0x55e78ec8b9d5 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x1749d5) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#8 0x55e78eca66d6 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x18f6d6) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#9 0x55e78ecaf002 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x198002) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#10 0x55e78eccd4f6 (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x1b64f6) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

#11 0x7fcea07fdd8f (/lib/x86_64-linux-gnu/libc.so.6+0x29d8f) (BuildId: 095c7ba148aeca81668091f718047078d57efddb)

SUMMARY: AddressSanitizer: bad-free (/home/ubuntu/raven/fuzz/target/x86_64-unknown-linux-gnu/release/fuzz-native+0x10e556) (BuildId: 0a135d2c356e27bb9ccb7046833c897d032c9b50)

==2999==ABORTING

(to be clear, this was something that I introduced when refactoring)

Out of the gate, this already has "nightmare bug" vibes: the fuzzer isn't failing because the interpreter and assembly implementation diverged in behavior, but due to an AddressSanitizer failure when freeing memory! I've seen this before, and it's never fun: it means that something in the assembly implementation is stomping over unrelated memory.

This was also a case of the fuzzer getting very, very lucky: it found this bug once, but in subsequent tests (with the bug still present) ran for hours without finding it again. (While debugging, I was seriously starting to wonder if it was a bug in libfuzzer itself)

The program that triggers this crash is SUB EQUk STZ2k ROT2 EQUr EORkr GTHkr SUB JCN2r

Its behavior isn't obvious to me, and it's surprisingly non-trivial. When the program terminates, the return stack is full of alternating 1 and 0, and the data stack has a more complex pattern of values – 120 zeros, then the following:

[0x00, 0x00, 0x00, 0x12, 0x12, 0x00, 0x00, 0xee,

0x12, 0x12, 0x11, 0x11, 0x12, 0x11, 0x11, 0x11,

0x11, 0x10, 0x10, 0x11, 0x10, 0x10, 0x10, 0x10,

0x0f, 0x0f, 0x10, 0x0f, 0x0f, 0x0f, 0x0f, 0x0e,

0x0e, 0x0f, 0x0e, 0x0e, 0x0e, 0x0e, 0x0d, 0x0d,

0x0e, 0x0d, 0x0d, 0x0d, 0x0d, 0x0c, 0x0c, 0x0d,

0x0c, 0x0c, 0x0c, 0x0c, 0x0b, 0x0b, 0x0c, 0x0b,

0x0b, 0x0b, 0x0b, 0x0a, 0x0a, 0x0b, 0x0a, 0x0a,

0x0a, 0x0a, 0x09, 0x09, 0x0a, 0x09, 0x09, 0x09,

0x09, 0x08, 0x08, 0x09, 0x08, 0x08, 0x08, 0x08,

0x07, 0x07, 0x08, 0x07, 0x07, 0x07, 0x07, 0x06,

0x06, 0x07, 0x06, 0x06, 0x06, 0x06, 0x05, 0x05,

0x06, 0x05, 0x05, 0x05, 0x05, 0x04, 0x04, 0x05,

0x04, 0x04, 0x04, 0x04, 0x03, 0x03, 0x04, 0x03,

0x03, 0x03, 0x03, 0x02, 0x02, 0x03, 0x02, 0x02,

0x02, 0x02, 0x01, 0x01, 0x02, 0x01, 0x01, 0x01,

0x01, 0x00, 0x00, 0x01, 0x00, 0x00, 0x00, 0x00]

It's clearly executing some kind of looping algorithm before it terminates.

To make matters worse, the program also runs fine using the raven-cli executable; the fuzzer gets (un)lucky that the program stomps on RAM that is monitored by AddressSanitizer.

I sicced Claude (Sonnet 4.6) on this, but it mostly spun its wheels; it's hard to tell whether that's because it wasn't making forward progress, or whether Anthropic's servers were particularly overloaded that day.

Eventually, I tracked it down myself: it was an out-of-bounds write of 0 in the STR instruction, which wrote to an address before the start of the VM's RAM. The correct location in the VM's RAM was already 0, so the interpreter and assembly implementation didn't diverge.

There was also a second reason the bug was so hard: as you may notice, STR wasn't in the program! The bytecode program writes data to RAM, then jumps to that address; the VM then treats that data as further bytecode.

Quick aside: The easy way to debug this issue

I spent a while doing printf debugging, which was not the best way to do it; as it turns out, Valgrind finds the out-of-bounds write, even when running the (seemingly-fine) raven-cli:

==61880== Invalid write of size 1

==61880== at 0x41D4E4B: ??? (in /home/ubuntu/raven/target/release/raven-cli)

==61880== Address 0x4cefbf0 is 16 bytes before a block of size 65,536 alloc'd

==61880== at 0x4A8C36C: calloc (vg_replace_malloc.c:1678)

==61880== by 0x41D8866: alloc_zeroed (alloc.rs:178)

==61880== by 0x41D8866: alloc_impl_runtime (alloc.rs:190)

==61880== by 0x41D8866: alloc_impl (alloc.rs:312)

==61880== by 0x41D8866: allocate_zeroed (alloc.rs:435)

# etc, etc

However, the address space is nonsense (0x41D4E4B).

It's then possible to combine it with GDB, by starting Valgrind with

$ valgrind --vgdb=yes --vgdb-error=0 ./target/release/raven-cli --native ../foo.rom

Then, from within GDB:

(gdb) target remote | /snap/valgrind/181/usr/libexec/valgrind/../../bin/vgdb

Remote debugging using | /snap/valgrind/181/usr/libexec/valgrind/../../bin/vgdb

relaying data between gdb and process 61880

Reading symbols from /lib64/ld-linux-x86-64.so.2...

Reading symbols from /usr/lib/debug/.build-id/8c/fa19934886748ff4603da8aa8fdb0c2402b8cf.debug...

0x000000000425c290 in _start () from /lib64/ld-linux-x86-64.so.2

(gdb) cont

Continuing.

Program received signal SIGTRAP, Trace/breakpoint trap.

0x00000000041d4e4b in _STR ()

(gdb) disas

Dump of assembler code for function _STR:

0x00000000041d4e39 <+0>: movsbq (%rbx,%r12,1),%rax

0x00000000041d4e3e <+5>: dec %r12b

0x00000000041d4e41 <+8>: mov (%rbx,%r12,1),%cl

0x00000000041d4e45 <+12>: dec %r12b

0x00000000041d4e48 <+15>: add %rbp,%rax

=> 0x00000000041d4e4b <+18>: mov %cl,(%r15,%rax,1)

0x00000000041d4e4f <+22>: movzbl (%r15,%rbp,1),%eax

0x00000000041d4e54 <+27>: inc %bp

0x00000000041d4e57 <+30>: lea 0x651a2(%rip),%rcx # 0x423a000

0x00000000041d4e5e <+37>: jmp *(%rcx,%rax,8)

End of assembler dump.

This is dead on, and would have saved me a few hours of frustration!

(For what it's worth, I'm comfortable with both Valgrind and GDB, but didn't know how to combine them; Claude Web helpfully provided the right commands)
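One way to read the disassembly above (this is an interpretation, not something the debugger states): movsbq sign-extends the offset byte to 64 bits, it is added to rbp (which looks like the program counter, since the next opcode is fetched through it), and the sum is used to index RAM without first being wrapped to 16 bits. With a small program counter and a negative offset, the index goes negative. The Rust sketch below contrasts the two computations; the function names and the example values are hypothetical, chosen only to reproduce the "16 bytes before" figure from the Valgrind report.

```rust
/// The address a relative store should use: PC plus a signed 8-bit
/// offset, wrapped to 16 bits so it always lands inside 64 KiB of RAM.
fn str_addr_wrapped(pc: u16, offset: u8) -> u16 {
    // u8 -> i8 reinterprets the byte as signed; i8 -> u16 sign-extends.
    pc.wrapping_add(offset as i8 as u16)
}

/// The same sum in wide arithmetic with no wrap, expressed as a signed
/// byte offset from the start of RAM. Negative means "before RAM".
fn str_addr_unwrapped(pc: u16, offset: u8) -> i64 {
    pc as i64 + (offset as i8) as i64
}
```

For example, with `pc = 0x0002` and an offset byte of `0xee` (that is, -18), the wrapped address is `0xfff0`, while the unwrapped sum is -16: a write 16 bytes before the allocation. The two only disagree when the sum leaves the 0..=0xffff range, which helps explain why such a bug can stay hidden for so long.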

Second aside: Hitting it with a bigger model

Out of curiosity, I reintroduced the bug into the codebase and threw Opus 4.6 (1M context window) at it, with --dangerously-skip-permissions:

raven-uxn implements several interpreters for the Uxn virtual machine. There's one implementation in Rust, but the interesting ones are in raw assembly. Writing raw assembly improves performance because we can write threaded code, where each instruction jumps directly to the next (Rust can't do this because it lacks guaranteed tail recursion). Anyways, I've been having a rare issue with the x86 assembly backend: one particular program sequence fails in fuzzing. The program sequence is the following opcodes: SUB EQUk STZ2k ROT2 EQUr EORkr GTHkr SUB JCN2r. When run in the fuzzer, this triggers an AddressSanitizer error. Interestingly, it does not trigger a check for discrepancies between the interpreter and assembly implementations, so it's producing the correct behavior (or incorrect behavior that doesn't change the end state of the VM). You can reproduce this with cargo +nightly fuzz run --release fuzz-native foo.rom. Your mission is to track down whatever is causing this issue.

I then went upstairs to make myself a cup of tea.

When I came back downstairs (after about 10 minutes), it had not solved the problem; indeed, it took a whole 18 minutes to figure it out. Along the way, it fixed five other instances of the incorrect pattern, which I hadn't noticed.

All of this — and subsequent semi-automated cleanups — cost another $25.

Opus did okay but not great at automated cleanup ("find all cases where we do a 32-bit load but only use the lowest 8 bits, and replace them with 8-bit loads"). It would often declare that it had fixed everything, only for me to find more examples of the undesirable pattern.

Is raven-uxn slop now?

I don't know, you tell me – ideally on social media, with personal insults and imprecations about my character!

Back in 2024, if someone had taken me up on my suggestion to write an x86 backend and had opened a PR with the same code that Claude delivered, I would have given it a similar amount of review / editing before merging it in.

(Honestly, I would have made fewer changes to a human PR, because I'm sensitive to completely ripping up someone's work; the diff from the original agent's implementation is substantial)

Is that implementation irrevocably tainted by its source, even after my edits?

A second perspective: if someone in 2026 had opened a PR with this same code and told me that Claude wrote it, I probably wouldn't have merged it – I don't trust strangers to apply the same level of engineering rigor when using LLMs.

Finally, this wouldn't have gotten done without Claude Code: I've got too much else to do, and the activation energy was too high. Is lowering energy barriers worth polluting the cognitive ecosystem with out-of-distribution entities?

What's next?

The PR is now merged, and a new 0.2.0 release is on the way.

This experience hasn't made me a vibe-coding maximalist; I find that the act of writing code myself is necessary to build the mental model of a complex system, and concerns about cognitive debt ring true to me.

However, I was impressed by Opus 4.6's ability to debug the subtle assembly bug, and will consider reaching for it in the future. There's an old aphorism that debugging requires being twice as clever as writing the code initially, so if you write code that's at your cleverness limit, you won't be able to debug it; if LLMs help with debugging, it frees me up to write more clever code!

(and I wish people would stop arguing that these tools don't work)